2019 July 28

Changing churches

My Christian readers may be interested to know that, after a long and prayerful search, Melody and I have moved our church membership to Alps Road Presbyterian Church.

For the first time in our lives, we are not Baptists.

It would not be correct to say we have "converted to Presbyterianism." The only doctrinal change is that we accept that infant baptism is valid when reaffirmed by the baptized person at a more mature age. (Baptists do not baptize infants.)

What about predestination? That's what you've probably heard is distinctive about Presbyterians, but actually it's not.

The Bible uses the term, and there's a spectrum of opinion about what it means. We are not hard-line Calvinists, and neither are most of the Presbyterians we know.

The Calvinist doctrine that I most eagerly affirm is that God is the cause of our salvation; it is not caused by our actions or the actions of those who evangelize us. That doesn't mean we don't evangelize; we are glad to participate in God's work. But we don't take credit for the results.

Alps Road is an ECO Presbyterian church (Evangelical Covenant Order), more doctrinally conservative and with more congregational autonomy than the PCUSA (the largest Presbyterian denomination in this country), but less tied to Presbyterian history and distinctives than the more conservative PCA.

But doctrine is not why we moved; we didn't disagree with our previous church.

In particular, we did not have a falling out with the Southern Baptist Convention; we still admire it as an organization, especially its avoidance of political entanglements and the good work that comes from its Ethics and Religious Liberty Commission.

The things that drew us to Alps Road are:

- A focused worship style that uses traditional (including classical) music and a semi-liturgical format, so that the elements of worship (confession, adoration, etc.) are always present and easy to follow.

- Awareness of, and respect for, church history. As children of the Reformation, Presbyterians believe that the Church underwent a major correction in the sixteenth century, but that both before and after it, it was truly the Church. Among Baptists, that view is common but competes with another: that the Church deviated from the Bible soon after the apostolic era, became largely ineffective, and did not return to true Christianity until recent centuries.

- A realistic attitude toward the educational level of the congregation. I don't mean that all the sermons are at a postgraduate level, or anything like that. But other churches often teach at a sub-college level even when everybody in the room is college-educated or college-bound. This is probably a holdover from the way things were done 50 years ago, when many members were less educated. The teaching in such a church need not be vacuous; it is often deep, but there's too little engagement with general knowledge of the real world, and college-bound students can get the impression that Christianity has nothing to say about anything they learned after eighth grade.

- Little practical things: the details of the services are available on the Web (without having to watch a whole video), which enabled us to recognize the worship style before actually visiting. (Sample here.) And the length of the service is carefully managed so that it finishes in one hour. That's important to people with hip or spine problems, such as Melody. Some people can sit for an hour but not for a vague, open-ended hour and a half.

American evangelical Christianity has undergone some major changes in the past decade or two, and, frankly, some of them left us behind.

We have, of course, had a period of unprecedented confusion between religion and politics. I am happy to say that all of the churches we've attended have done a good job of keeping secular politics out of the sanctuary.

The other major change, which came in with the megachurch movement, was the widespread shift to "all-contemporary worship," throwing out the hymnals and singing only recently composed music performed by a "praise band."

We are not old geezers who can't stand new music. Some new music is excellent and should be accepted on its merits. But we aren't willing to throw out all the old music and turn the whole church into a 1970s-style youth program.

Often, a youth program is exactly what it is: the first thing people say is that old music would scare away young people and potential converts.

Whether this is true is untested, and anyhow, the purpose of a church service is not just to attract people who are not there, but also to edify those who are.

We are happy to have contemporary worship as an alternative or special program (Alps Road does), but not to throw out traditional worship and music entirely, as so many churches are doing.

A church that throws out Bach and Handel and "Amazing Grace" and "Holy, Holy, Holy" has, we feel, cut loose from its heritage in a dangerous way.

At the same time, we recognize that, for many people, contemporary worship filled a vacuum.

Many evangelical churches, making an undue effort to keep things plain and simple, had developed, in the 20th century, a style of worship that could only be described as boring. Contemporary worship lifted them out of that into a style where the elements of worship were more clearly present, with more focus and less distraction.

But that was not the only possible solution. The alternative was to reconnect with tradition and use knowledge the church already had, and that's what we've done.


2019 July 27

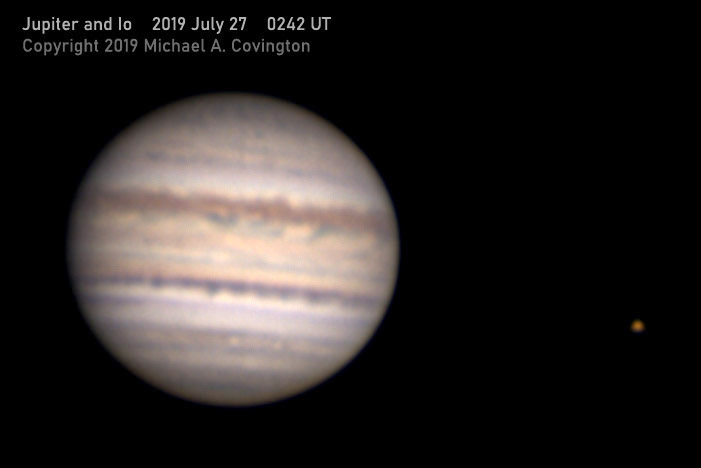

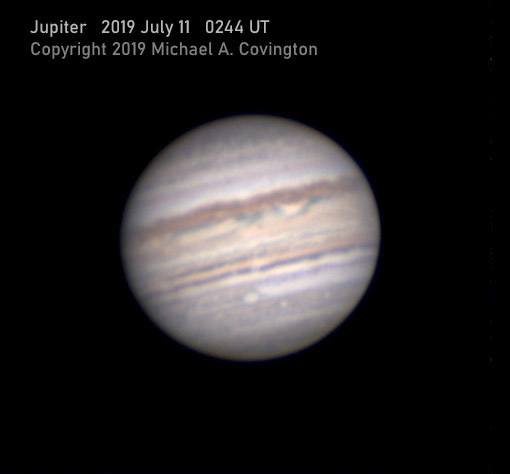

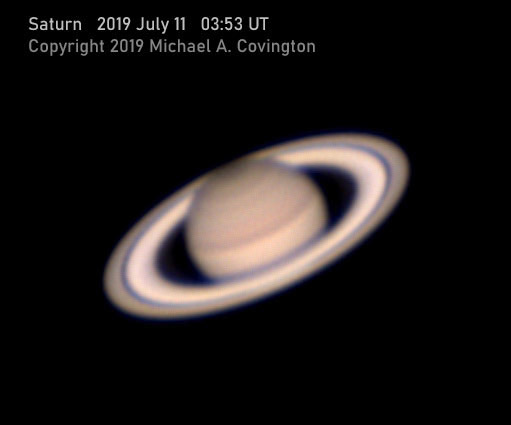

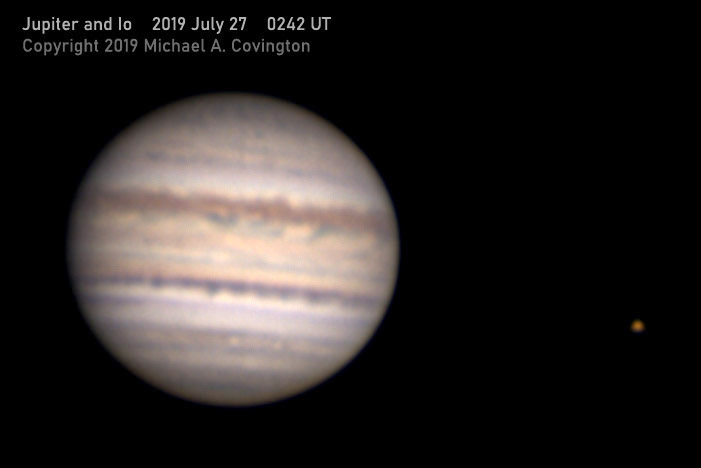

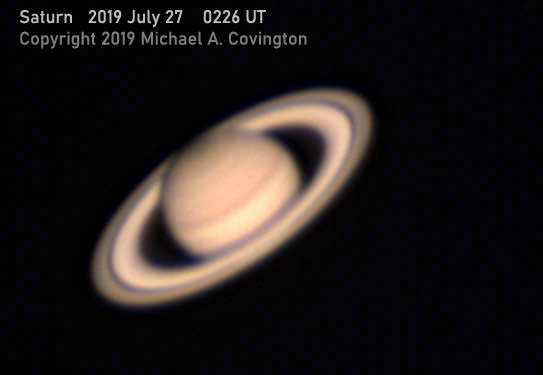

Last night's planets

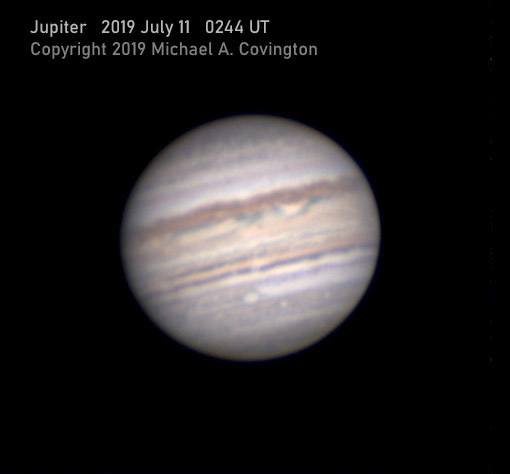

Compare them with the pictures I took on July 11, using the same equipment and method and looking at the same side of Jupiter. You can see that some features on Jupiter have changed and some haven't.


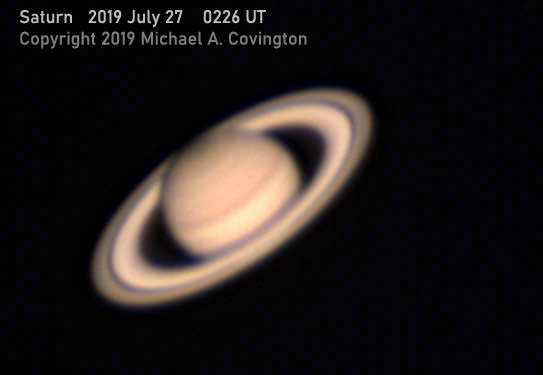

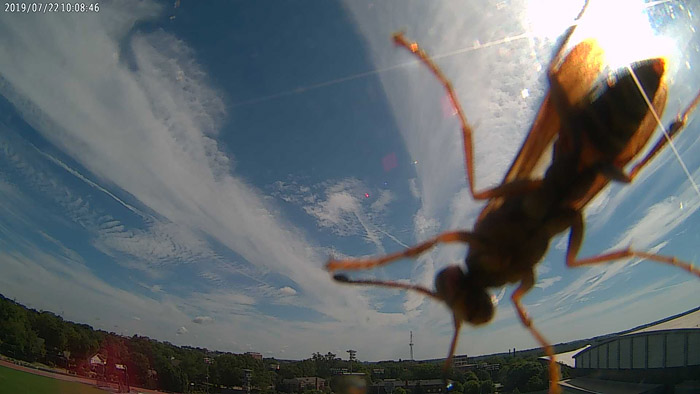

Waspzilla attacks

This image, from the University's Butts-Mehre weather camera, has amused a lot of people recently...


2019 July 25

Cygnus in hydrogen-alpha

This is the field of Gamma Cygni (click here for another image), photographed in an unusual way.

I used a Lumicon Hydrogen-Alpha filter, a red dye filter that transmits only wavelengths longer than 640 nm, combined with my modified Nikon D5500 and a Sigma 105-mm f/2.8 lens at f/4. This is a stack of ten 4-minute exposures, using only the red pixels and treating the result as a monochrome image. The nebulae stand out because they glow at a wavelength of 656 nm, and the picture covers only about 640 to 700 nm, rejecting most light from other sources.
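The "red pixels only, then stack" step can be sketched in a few lines. This is a minimal sketch under stated assumptions, not the actual processing pipeline: it assumes an RGGB Bayer pattern (red photosites at even rows and even columns), frames that are already calibrated and aligned, and plain averaging. The function names are hypothetical; real stacking software also aligns the frames and rejects outliers.

```python
def red_channel(raw):
    """Keep only the red photosites of a Bayer-mosaic frame (a list of rows).

    Assumes an RGGB tile, so red sits at even rows and even columns.
    The result is a monochrome image at half the width and half the height.
    """
    return [row[0::2] for row in raw[0::2]]


def mean_stack(frames):
    """Average already-aligned monochrome frames, pixel by pixel."""
    n = len(frames)
    rows, cols = len(frames[0]), len(frames[0][0])
    return [[sum(f[r][c] for f in frames) / n for c in range(cols)]
            for r in range(rows)]


# Two tiny 4x4 "raw" frames; only the red sites (even row, even column) survive.
raw_frames = [
    [[10, 1, 20, 2], [3, 4, 5, 6], [30, 7, 40, 8], [9, 9, 9, 9]],
    [[12, 0, 22, 0], [0, 0, 0, 0], [32, 0, 42, 0], [0, 0, 0, 0]],
]
stacked = mean_stack([red_channel(f) for f in raw_frames])
print(stacked)  # [[11.0, 21.0], [31.0, 41.0]]
```

Discarding the green and blue photosites costs resolution (half as many pixels each way), but with a filter that passes only red light, those photosites would record almost nothing anyway.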

What you see are the stars of the Milky Way, some glowing hydrogen nebulae, and some dark nebulae obscuring the background. I was delighted to be able to take this picture in town, at my home, under skies that were clear but none too dark because of city lights.


And the North America Nebula...

Here's the North America Nebula done the same way. Again, I'm delighted that I got this much; under a really dark sky I would have been able to photograph more.


Happy 37th anniversary, Melody!

I tell people we're still newlyweds — it's been less than a century.

3700 more years would not be enough!


2019 July 23

A surprising discovery about Celestron CGEM clutch knobs

Unpaint them!

The black paint on the clutch knobs of my Celestron CGEM was scuffing off at the edges, and I wanted the knobs to be more visible anyhow, so a couple of years ago I repainted them orange, with poor results. I suspected the inner coat of paint was loose, so I decided to remove all the paint. Over the past few days I gave the knobs 24 hours in a jar of paint thinner, followed by a lot of wire brushing, and made a surprising discovery...

The knobs are not, as I thought, aluminum.

They are a slightly warm-colored, silvery, non-magnetic metal with a density of about 7.5 g/mL (my own rough measurement).

Unless someone comes up with a more exotic theory, I think they are stainless steel, which is notoriously hard to paint and never should have been painted.
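The post doesn't say how the density was measured. One common way to get a rough figure (assumed here, not necessarily the method used) is hydrostatic weighing: weigh the part dry, then weigh it hanging fully submerged in water. The readings below are hypothetical, chosen only to show the arithmetic; treating water as 1.0 g/mL is close enough for a rough figure. For comparison, aluminum is about 2.7 g/mL, so a reading anywhere near 7.5 rules it out.

```python
def density_by_immersion(mass_dry_g, mass_submerged_g, water_density=1.0):
    """Estimate a solid's density in g/mL by hydrostatic weighing.

    By Archimedes' principle, the apparent loss of mass when the part hangs
    fully submerged equals the mass of water it displaces; dividing that by
    the density of water gives the part's volume.
    """
    displaced_g = mass_dry_g - mass_submerged_g
    if displaced_g <= 0:
        raise ValueError("the submerged reading must be lower than the dry reading")
    volume_ml = displaced_g / water_density
    return mass_dry_g / volume_ml


# Hypothetical readings for illustration only (not the actual knob measurements).
print(density_by_immersion(75.0, 65.0))  # 7.5
```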

So I finished the wire brushing, followed up with a finer wire brush on a Dremel and then a buffing wheel, and ended up with knobs that look like chromed motorcycle parts. I'll leave them unpainted!


2019 July 22

Covington's Razor

Never attribute to incompetence what can be adequately explained by bad management higher up.

This is analogous to Hanlon's Razor, which in turn is named after Occam's Razor; all three are principles for trimming away needless explanations.

It's a truism of the business world. After experiencing an instance of it (in a store), I expressed it concisely on Facebook; someone named it Covington's Razor, and it started to spread.


2019 July 21

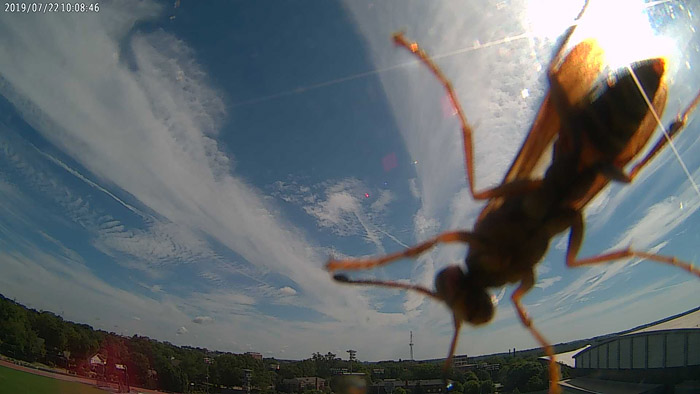

A farewell to TTL

And how I first heard the sound of the 1980s

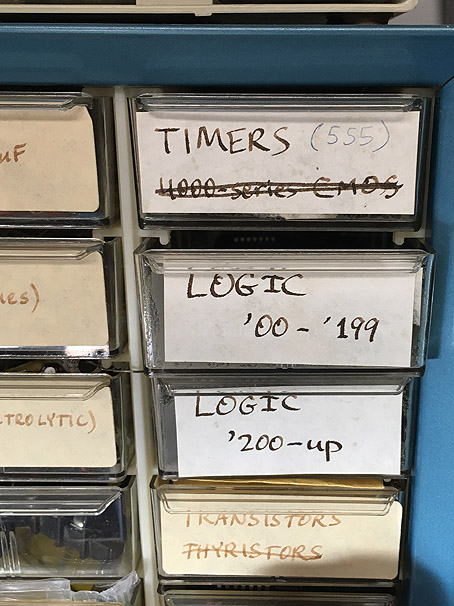

I'm modernizing my electronics workshop, and a couple of evenings ago I moved a lot of obsolete parts to back storage. That involved emptying several drawers of TTL integrated circuits, and it was a poignant moment when I realized that I was removing some things from a drawer they had been in since 1982, in a blue cabinet that I had bought when Melody and I were newlyweds.

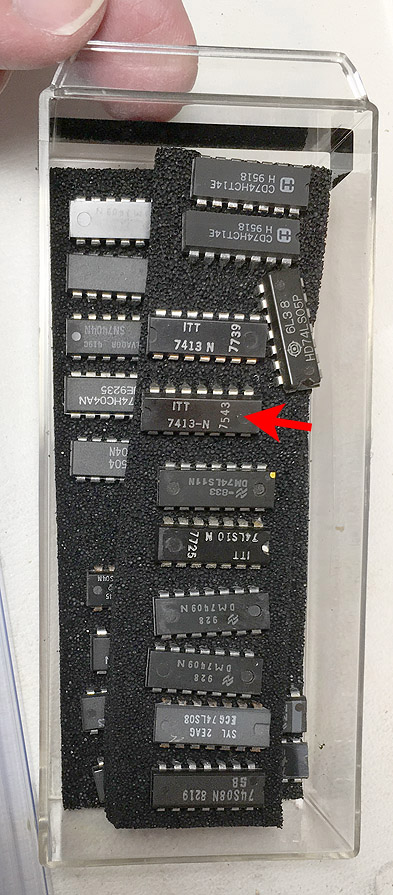

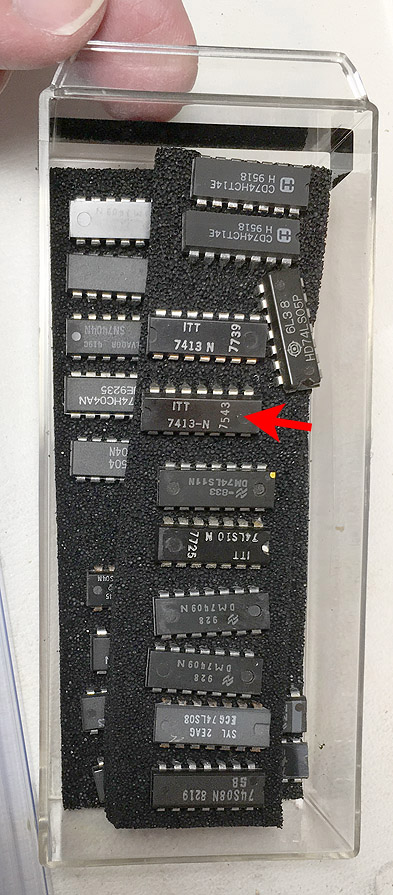

Here are the contents of a couple of the drawers. Of course, the newer 74HC ICs that were mixed in will stay (and have taken over one of the original 1982 logic-chip drawers). But one of the old chips has a 1975 date code! Notice also the stick-on labels, called "bug tags" and sold to hobbyists in the early 1980s.

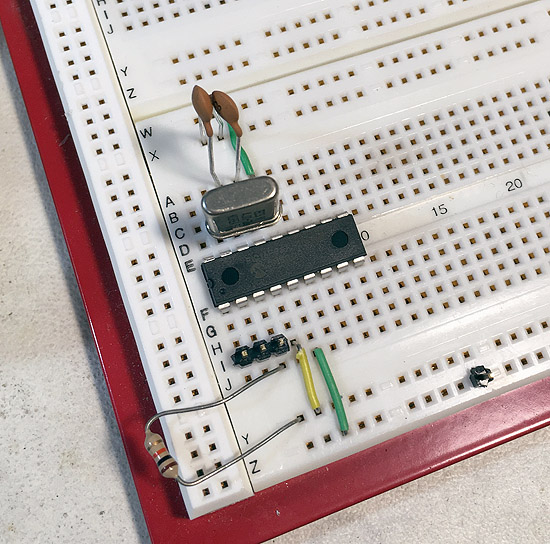

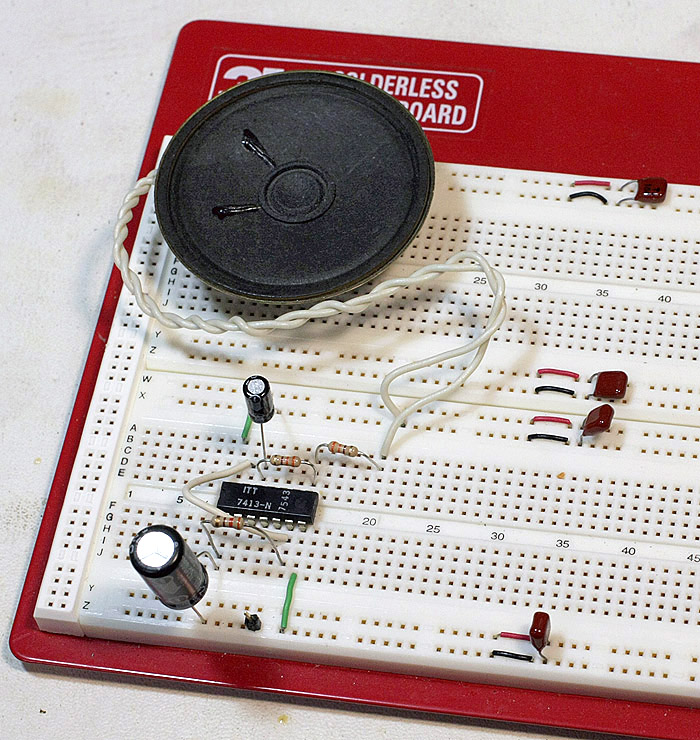

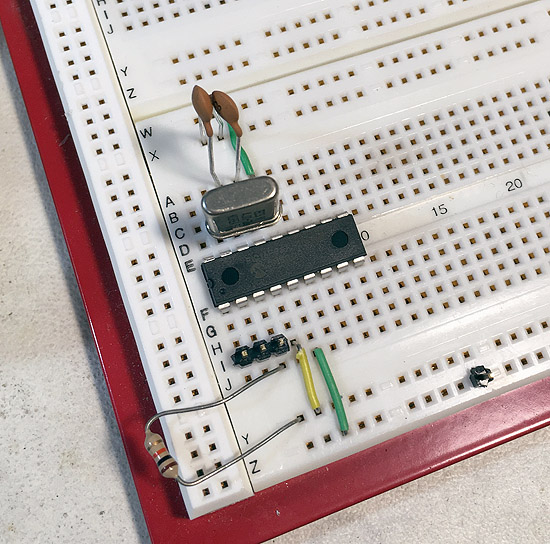

Before putting all the TTL chips away, I decided to replicate (with the very oldest one, of course) the first TTL circuit I ever breadboarded.

My first contact with TTL came while visiting Arthur Christian at his parents' home in Cheshire during Easter vacation from Cambridge in 1978. Arthur was doing an engineering degree and had brought some breadboards, components, and a power supply home from school. I knew the theory of logic circuits but had never used them hands-on.

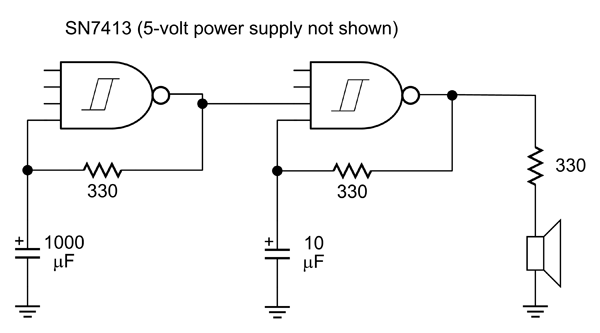

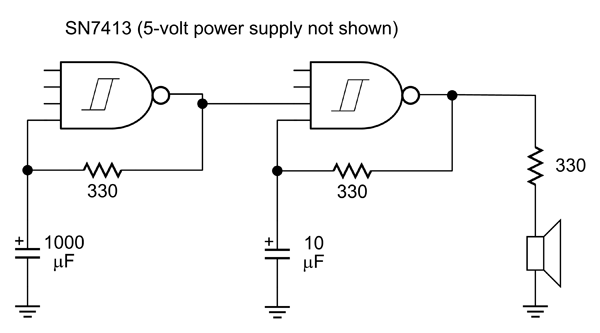

He let me experiment with his equipment, and I breadboarded this circuit (as best I can remember it):

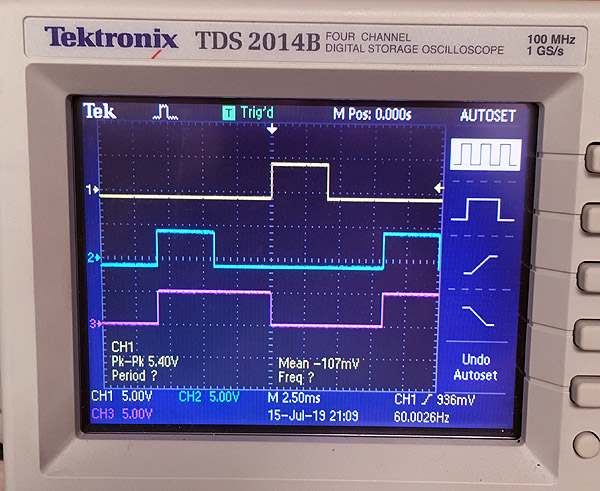

It's a fast oscillator gated by a slow oscillator, driving a speaker.

Those unfamiliar with TTL will be surprised by how small the resistors have to be (necessitating large, expensive capacitors), and also by the fact that it's OK to leave inputs unconnected (they count as logic 1).

But at the time, this circuit impressed me; doing it with discrete parts would have required four transistors, four capacitors, and about a dozen resistors.

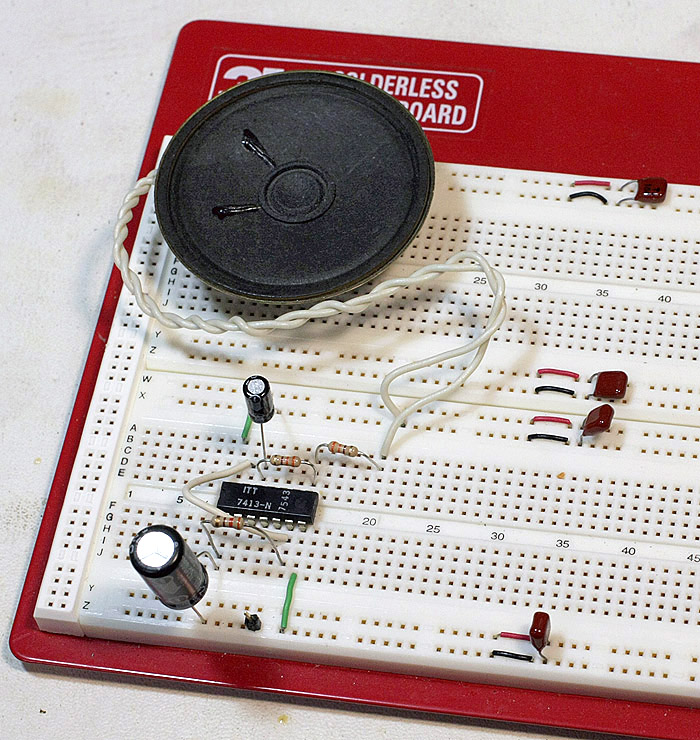

Here's what it looked like on the breadboard just now:

It makes a sound that was uncommon in 1978 but became very common afterward:

Beep beep beep beep beep beep....

When I heard it, I immediately said to myself, "This is what the 1980s are going to sound like. This sound is going to be common because it is so easy to make."

Before the advent of cheap digital ICs, beep-beep-beep sounds were much less common, and when they did occur, as I recall, they tended to have a slightly soft onset and decay, like a musical instrument.

Not any more! We have spent the rest of our lives being beeped at.
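The behavior of the circuit described above, a fast oscillator gated by a slow one, can be modeled in software. This is a sketch, not a simulation of the actual TTL schematic: the gating is treated as a logical AND, and the 1000 Hz tone and 2 Hz gate rate used below are hypothetical, since the real frequencies depend on the resistor and capacitor values in the drawing. `write_wav` uses Python's standard `wave` module so the result can be heard.

```python
import wave


def gated_square_wave(fast_hz, slow_hz, seconds, rate=8000):
    """Return 0/1 samples of a fast square wave switched on and off by a slow one.

    A square wave of frequency f is high during the first half of each period,
    i.e. when (2 * n * f) // rate is even. Integer frequencies keep the edges exact.
    """
    samples = []
    for n in range(int(seconds * rate)):
        tone = (2 * n * fast_hz // rate) % 2 == 0   # fast oscillator: the pitch
        gate = (2 * n * slow_hz // rate) % 2 == 0   # slow oscillator: on/off
        samples.append(1 if (tone and gate) else 0)  # tone passes only while gate is high
    return samples


def write_wav(path, samples, rate=8000):
    """Save 0/1 samples as an 8-bit mono WAV file so you can listen to them."""
    with wave.open(path, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(1)   # 8-bit WAV samples are unsigned, centered on 128
        w.setframerate(rate)
        w.writeframes(bytes(192 if s else 64 for s in samples))
```

Calling `write_wav("beep.wav", gated_square_wave(1000, 2, 3.0))` produces three seconds of beeping, two beeps per second, each a quarter-second long. Because the gate switches the tone fully on and off, every beep starts and stops abruptly, with none of the soft onset and decay of older beeps.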

Update: I thought this was the most boring, idiosyncratic blog entry I had ever written, but I was delighted to receive fan mail about it (on Facebook) from a famous electronics writer. So there are at least two people in the world who understand it...


2019 July 20

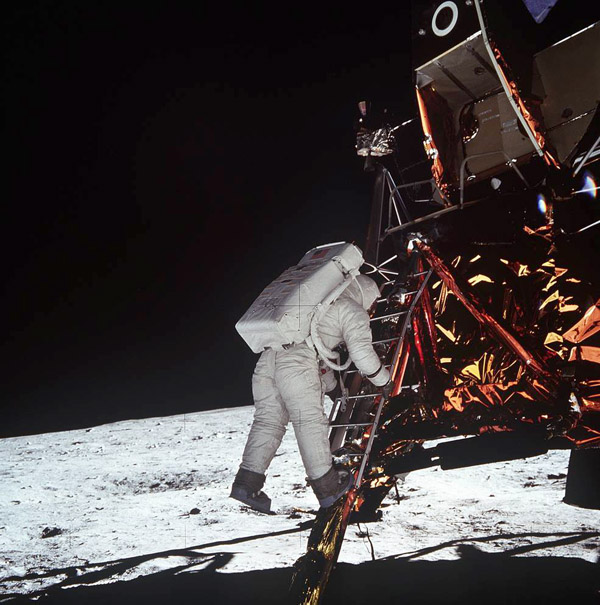

One giant leap for mankind

Did Apollo 11 change the world?

NASA

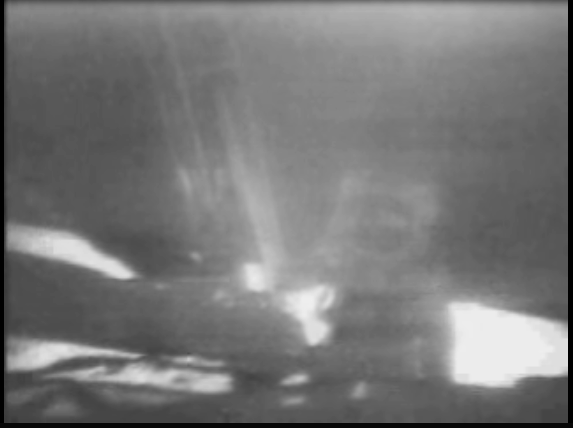

Fifty years ago today, almost everyone in the world who could watch TV saw the blurry image above, and heard the words, "That's one small step fr'a man, one giant leap for mankind." I was almost 12 years old and vacationing on Jekyll Island with my family and cousins. We had been to Fort Frederica that day and were watching TV in the motel.

TV in the 1960s usually worked better than that, of course, and was often in color,

but the slow-scan transmitter

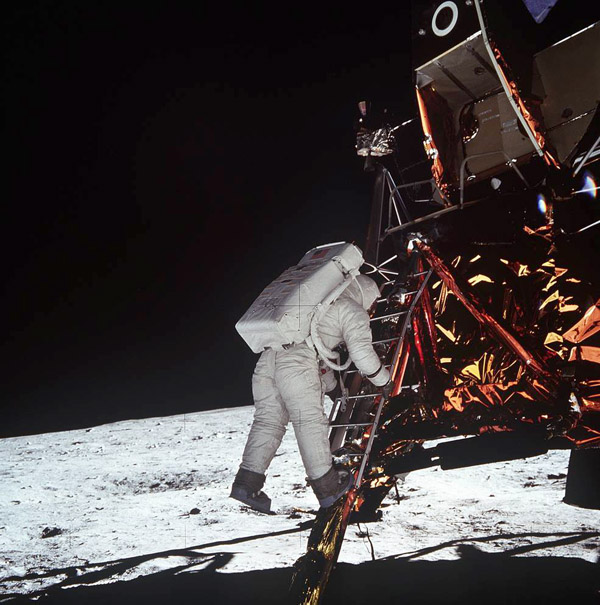

on the moon had serious technical limitations. Here is an Ektachrome slide taken by the

first astronaut (Armstrong) of the second astronaut (Aldrin) coming down, to show you what

that "one small step" actually looked like.

NASA

Looking back, I think the historical impact of the race to the moon was completely different from what people thought it might be at the time.

Back then, there was much concern that Project Apollo spent money wastefully (which is hard to deny; I'm told one of their slogans was, "waste anything but time") and that it contributed nothing to solving problems on earth, such as poverty and war.

It did not inaugurate the colonization of space. We made six more missions to the moon and stopped. By 1974, the hot new thing in technology was not space flight but microprocessors.

Did it start a new era at all? I think so, but indirectly.

The economic benefits of the space race are hard to quantify. At first they seemed paltry compared to the cost. Yes, we got a handful of useful inventions, but the same effort could have produced much more if expended differently. At least, that's how we felt in the early 1970s.

Looking back from farther down the road, though, I see that many of the technological spinoffs took longer to mature and were underappreciated at the time.

The biggest benefit, I think, was that the space race raised the educational level of the whole nation, maybe even the whole world, in a way that has had a huge payoff.

Science became a much more valued part of a general education. In the 1950s, you could get a college degree with surprisingly little science and mathematics. By 1970, science wasn't just something you dabbled in for a couple of years in high school; it was seen as an important part of human activity. New technology was becoming available rapidly, and people wanted and needed to understand it.

In fact, arguably, even the concern that we were neglecting problems on earth came out of the global perspective and higher educational expectations that the space race gave us.

People who don't think about anything more than thirty miles away (a common mindset in the 1930s) don't care about the world's problems.

But when we saw the famous picture from Apollo 8, it crystallized, for many of us, the concept that the earth is our home, and the whole earth is our responsibility.

NASA


2019 July 16

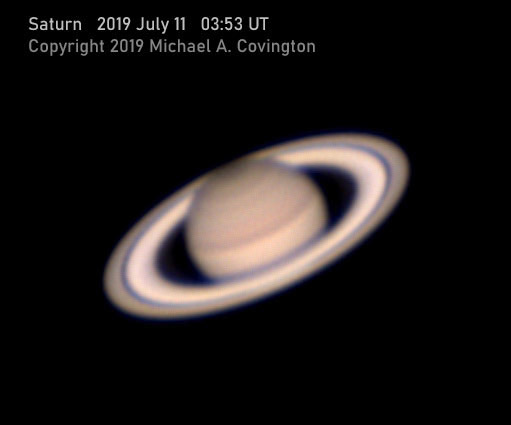

Planets

On the evening of July 10 I photographed both Jupiter and Saturn.

Celestron 8 EdgeHD, 3× converter, best 50% of a large number of video frames (2889 for Jupiter, 1297 for Saturn), stacked and processed with AutoStakkert and RegiStax.
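Keeping only the best 50% of the frames is the "lucky imaging" idea: frames captured during moments of steadier air are sharper, so averaging only those gives a better result. Here is a minimal sketch of the selection and averaging step, assuming the frames are already aligned and using gradient energy (the sum of squared differences between neighboring pixels) as the sharpness score. That metric is an assumption chosen for illustration; it is not a description of AutoStakkert's actual quality estimator, and real programs also align the frames, often patch by patch.

```python
def sharpness(frame):
    """Gradient energy: sum of squared differences between neighboring pixels.

    Blurred frames have gentler transitions, so they score lower.
    """
    total = 0
    rows, cols = len(frame), len(frame[0])
    for r in range(rows):
        for c in range(cols):
            if c + 1 < cols:
                total += (frame[r][c + 1] - frame[r][c]) ** 2
            if r + 1 < rows:
                total += (frame[r + 1][c] - frame[r][c]) ** 2
    return total


def stack_best(frames, keep_fraction=0.5):
    """Average the sharpest fraction of already-aligned frames."""
    ranked = sorted(frames, key=sharpness, reverse=True)
    n_keep = max(1, int(len(ranked) * keep_fraction))
    best = ranked[:n_keep]
    rows, cols = len(best[0]), len(best[0][0])
    return [[sum(f[r][c] for f in best) / n_keep for c in range(cols)]
            for r in range(rows)]


crisp = [[0, 10], [10, 0]]
blurred = [[5, 5], [5, 5]]
print(stack_best([blurred, crisp]))  # [[0.0, 10.0], [10.0, 0.0]]
```

With the 2889 Jupiter frames and `keep_fraction=0.5`, this would keep 1444 of them.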


2019 July 15

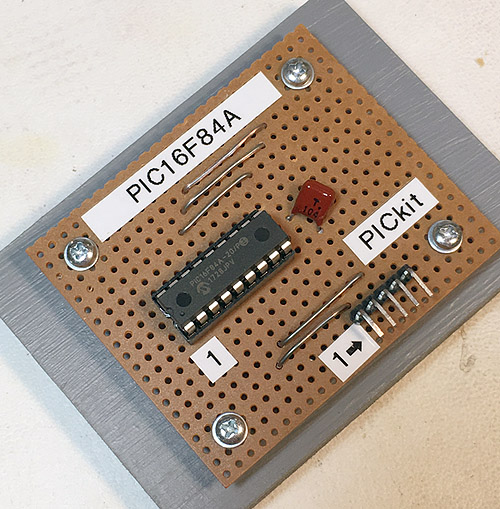

Alcor rides again

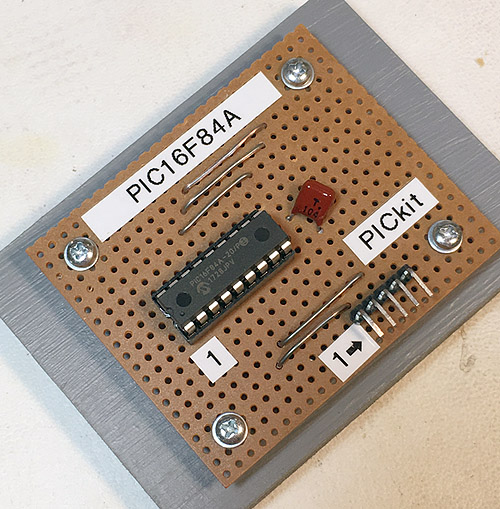

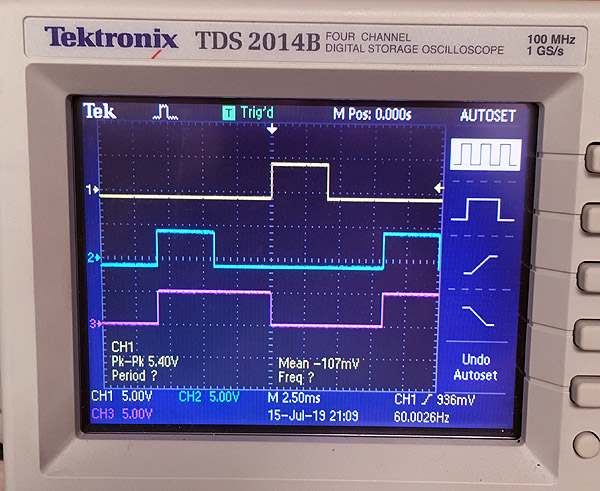

What got me back into microcontroller work was that someone asked me for help with Alcor, a drive controller for old-style telescope motors that I designed in (gasp!) about 1996 and released in final form (or so I thought) in 1999.

It felt strange to be working on an assembly-language program that I had last edited more than 20 years earlier. It's probably the last assembly-language program I'll ever work on, now that the price of a good C compiler has fallen to zero.

What I've done is add a version for motors that have special gears to produce sidereal rate on 60.000 Hz, revise Alcor's web page, and add another page about how to program an old-style PIC16F84A with modern ICSP programmers such as the inexpensive PICkit.
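Why sidereal rate on exactly 60.000 Hz needs special gears: a sidereal day (one rotation of the sky) is about 86,164.09 seconds, slightly shorter than the 86,400-second solar day. Assuming a synchronous motor geared so that 60 Hz gives one turn per solar day, it has to be driven about 0.27% fast to follow the stars; a motor with special sidereal gearing can run on exactly 60.000 Hz instead. This is a worked sketch of the arithmetic only, not code from Alcor; the post doesn't say how Alcor generates its frequencies, and `half_period_us` is a hypothetical helper showing the timing a square-wave drive would need.

```python
SOLAR_DAY_S = 86400.0        # mean solar day, seconds
SIDEREAL_DAY_S = 86164.0905  # one rotation of the earth relative to the stars, seconds


def sidereal_drive_hz(solar_rate_hz=60.0):
    """Drive frequency that makes a solar-rate synchronous motor follow the stars.

    A synchronous motor's speed is proportional to its drive frequency, so it
    must run faster by the ratio of the solar day to the sidereal day.
    """
    return solar_rate_hz * SOLAR_DAY_S / SIDEREAL_DAY_S


def half_period_us(freq_hz):
    """Time between output transitions of a square-wave drive, in microseconds."""
    return 1e6 / (2.0 * freq_hz)


print(round(sidereal_drive_hz(), 3))    # 60.164
print(round(half_period_us(60.0), 1))   # 8333.3
```

The difference between the two half-periods (about 8333 versus 8311 microseconds) is small, but over a four-minute exposure it is the difference between round stars and trailed ones.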

Here are some scenes from the development and testing process.

I have quite a few more projects in mind.


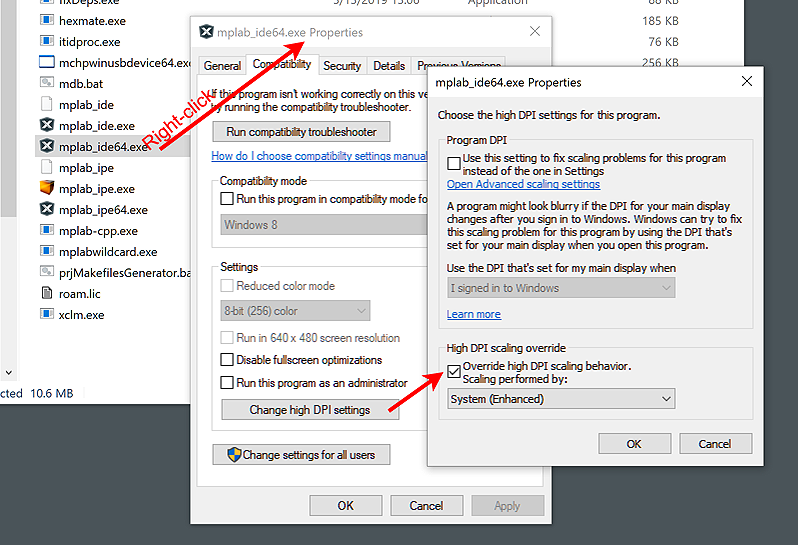

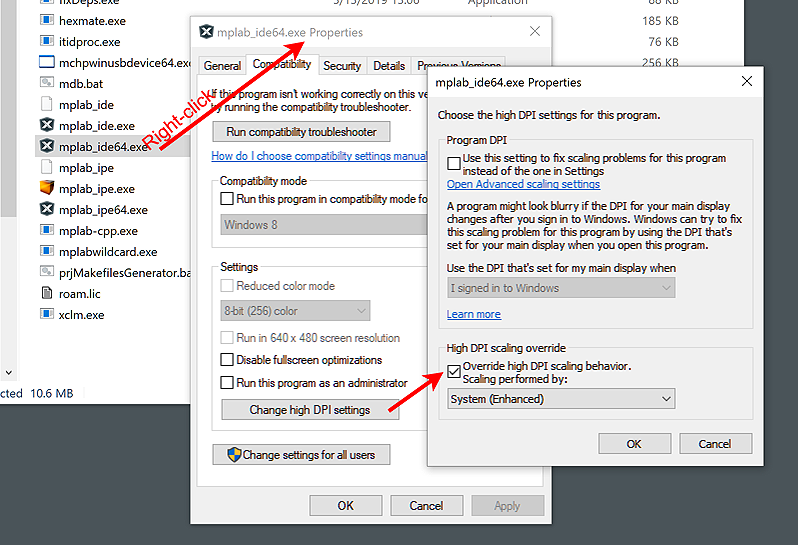

Software is tiny on a high-resolution screen

Known problem with MPLAB X among many others

While compiling microcontroller code on my PC, I ran into a problem that has also beset me with older versions of Photoshop and several other products: on my giant 4K screen, some of the windows (especially file dialogs) were tiny, and others switched between tiny and normal size at inopportune moments.

The problem is as follows. Traditionally, computer screens had no more than about 120 pixels per inch, and software addressed the pixels individually. But now we have high-resolution displays ("retina" displays, as some call them) with the pixels much closer together. Windows automatically scales software to the right size if it knows how, but occasionally it guesses wrong.
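A little arithmetic shows why unscaled windows look tiny. Pixel density is the diagonal pixel count divided by the diagonal size in inches, and Windows treats 96 dpi as 100% scaling, so a program that draws as if every screen were 96 dpi shrinks in proportion. The 27-inch size below is a hypothetical example; the post doesn't give the screen's dimensions.

```python
import math


def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density from a screen's resolution and its diagonal size in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


def unscaled_size_factor(screen_ppi, designed_for_ppi=96.0):
    """Apparent size of an unscaled program relative to its intended size (1.0 = correct)."""
    return designed_for_ppi / screen_ppi


ppi = pixels_per_inch(3840, 2160, 27)          # hypothetical 27-inch 4K monitor
print(round(ppi))                              # 163
print(round(unscaled_size_factor(ppi), 2))     # 0.59
```

On such a screen, an unscaled window appears at a bit more than half its intended size, which is why Windows needs roughly 170% scaling there to make it look normal.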

The cure is in the compatibility settings for the software. There are two ways to get to them:

- Find the executable file (e.g., in "C:\Program Files" or "C:\Program Files (x86)"), right-click on it, and choose Properties.

- Alternatively, right-click on the Start Menu icon for the software, choose More, then Open File Location; find the link to the software of interest, right-click on it, and choose Properties.

Now choose the Compatibility tab, click "Change high DPI settings," and choose "Override high DPI scaling behavior," selecting "System (Enhanced)" in the box below it. At least, that's what worked for me with MPLAB X; there are several settings to experiment with.


2019 July 13

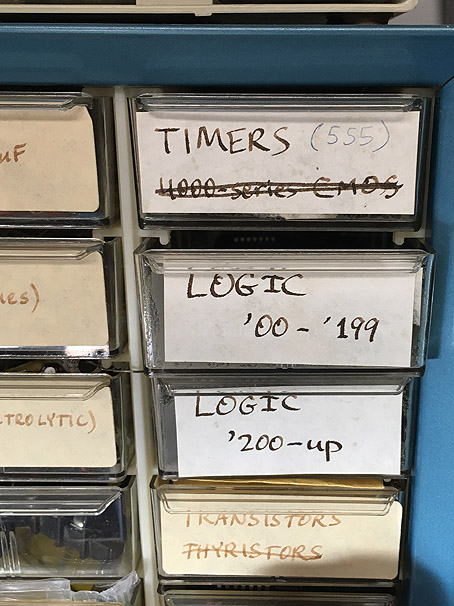

The second microprocessor revolution

There have been two microprocessor revolutions. The first, in the 1970s and 1980s, was when microprocessors became available. The second, happening today, is that they have become cheaper than even small amounts of other circuitry.

I'm not kidding. Nowadays, if all you want to do is make an LED flash, it can be cheaper to do it with a low-end microcontroller than with conventional ICs. The micro costs no more than a 555 or a dual op-amp. It costs less than a pair of transistors and a handful of resistors and capacitors. And you don't have to stock such a variety of parts; just program the micro to do what you need.

A complete (but small) computer on a chip, with ROM, RAM, and numerous I/O devices, can cost as little as 35 cents in quantity, or perhaps 70 cents singly. So it's no surprise that almost all functions involving switching, logic, and timing are being given to micros rather than conventional ICs.

I think that is a bigger change than the introduction of transistors (1950s) or conventional ICs (1970s). Those just gave us more convenient forms of circuits that had been invented (or nearly invented) in the tube era. Microprocessors give us something new: software (called "firmware" when stored inside the chip itself). Now you program your chips instead of just connecting them together.

And, sadly, the things we build aren't repairable by people who don't have the software. You can't just buy new parts and put them in.

The cost of using a micro involves three things, and all three have plummeted:

- Cost of the chips themselves (now under 50 cents, down from $40 in the 1980s);

- Cost of the apparatus and software to program them (now often around $20, formerly hundreds);

- Effort required to develop the programs (now a few minutes of coding in C rather than days of labor with quirky assembly language).

The last one may be the biggest change. The Arduino came along and was both affordable and very easy to program (no special apparatus, free software, a C-like language). That put pressure on manufacturers to make bare microcontrollers just as easy to use. And they're doing it.

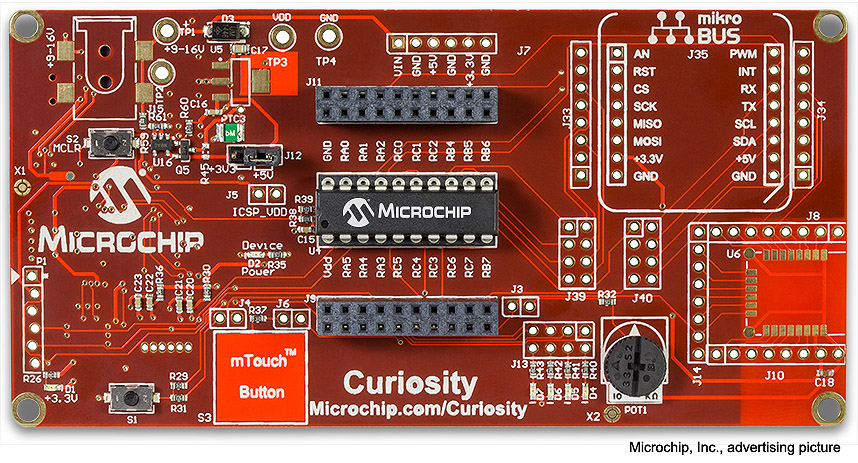

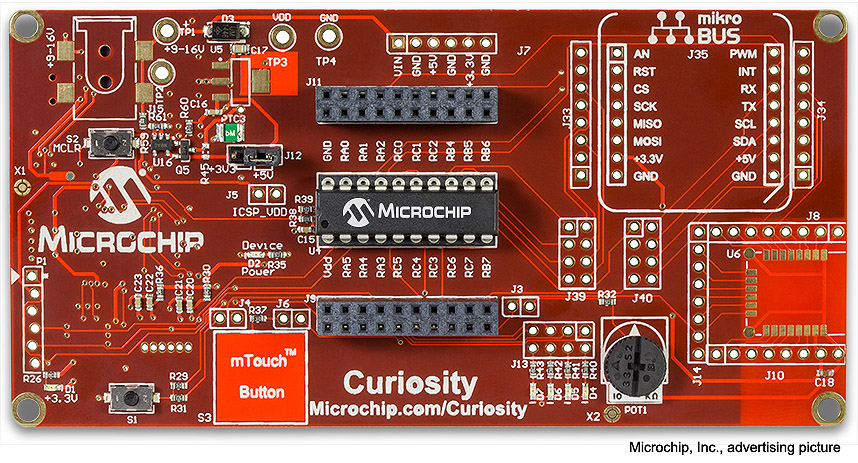

Consider, for example, the $20 "Curiosity" board shown above. I'm experimenting with one. It's a programmer for new-style PIC microcontrollers (the ones that all have the same pinout, except that part of the package is simply left out of the smaller ones). It is also a prototype board: you can modify it and have it actually be the chassis for the prototype of what you're building, which makes it ideal for student projects. It has buttons and LEDs connected to some of the pins, as well as connections for several kinds of add-ons, and there are lots of removable 0-ohm surface-mount resistors so you can break connections and make changes.

With it, you get free programming and debugging software for your PC (including Linux) and a good C compiler. What's more, the software includes a "code configurator" that generates C code to set up the pin definitions, operating mode, etc., so you don't overlook anything. Setup was actually the hardest part of using assembly language, because every micro had its own requirements and it was so easy to overlook things.

The other thing the Arduino gave us was a community of users with shareable code. Thorny programming problems have already been solved by other people. If you want to interface to an LCD display or some other special device, find someone who has already done it and shared the code. This saves a lot of work.

That idea has spread in two directions: Raspberry Pi for those who need more computing power (still super-cheap), and, in the other direction, easier use of bare microcontrollers, in which Microchip Technology Inc. takes the lead.

So, in the foreseeable future, I'll use bare micros for the simplest tasks, Arduinos for moderately complex ones, and Raspberry Pi for those that need power comparable to a small PC.

And I may be doing my last assembly language coding right now, updating a telescope drive controller that I designed in 1999. More about that soon.


More about how electronics has changed

I said above that the microcontroller revolution, replacing wiring with software, is a bigger change than simply making circuits smaller with transistors and ICs. That's not the only thing that has changed in electronics since my youth. I'm making a real effort to get caught up.

Around 2003-2006 I wrote part of an introductory electronics book, which has never been published. I struggled to keep it from becoming a 1980s nostalgia piece. I think it needs another revamping before I do much more with it.

Already in the 1970s, early in the IC era, I noticed that the real divide is not between hobbyists and professionals, but between one-off and mass-produced designs. Before that, in the Heathkit era, there had been no divide, and hobbyists could (if they cared to) build equipment that looked just like manufactured gear, inside and out. The decor of the panels might be the only visible difference. But not now!

That divide has widened. The high-density surface-mount printed circuit boards used in manufactured products are not cost-effective in quantity one. Instead, custom builders (hobbyist or pro) use ready-made prototype boards or breakout boards, on which a chip is already soldered to a circuit board with the required support components installed, and there's space for adding more. In fact, all the most useful ICs (voltage converters, audio amplifiers, etc.) that are available only in surface-mount form are being marketed on breakout boards by entrepreneurs in Asia. Instead of buying a chip, you can easily buy a half-inch-wide circuit board to incorporate into your own design; it may even ride on your circuit board as if it were an IC. (And technically it is an IC: a hybrid one, not a monolithic one.)

For one who started following electronics in the 1960s, it's sad that we can no longer build things the way the manufacturers do, but we can certainly get good results! The amount of functionality that we can build into a small case is greater than ever.

The other big technical change I've seen is a move toward 3.3-volt and even lower supply voltages. In the middle of my career, we always expected 5 volts for logic and 12 volts for power. Not anymore. Many devices use so little power that they can run from button-cell batteries delivering 2.7 volts. This means I need to rethink my stock of parts.

As an educator, I have another concern. As everything moves into ICs and firmware, will people still learn electronics? It's hard to think of diodes and transistors as important if you never see them or make any decisions about them.

There's a connection between nostalgia for the discrete-component era and a serious interest in how circuits work. (In the same way, many automotive enthusiasts are interested in older engines with little or no electronic control.) Machines are more interesting if you can see how they work.


2019 July 10

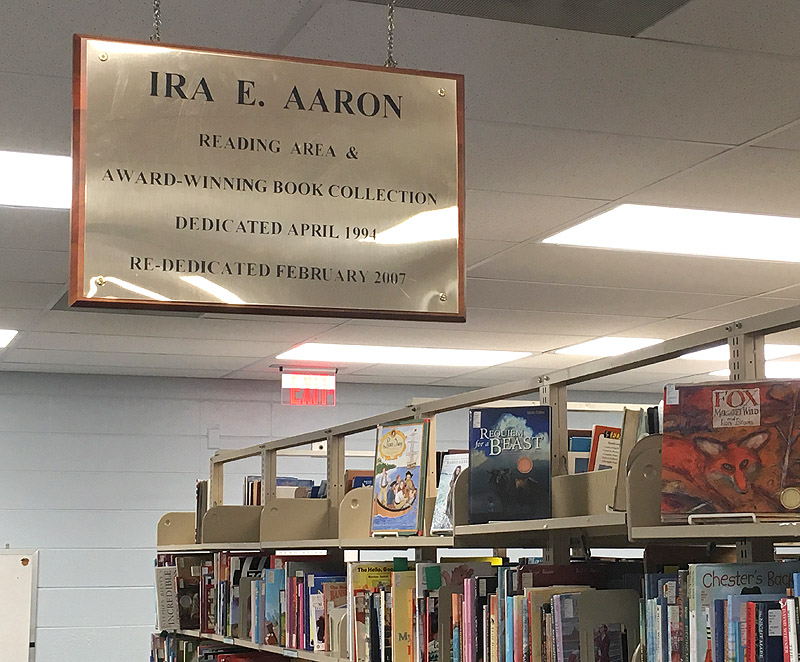

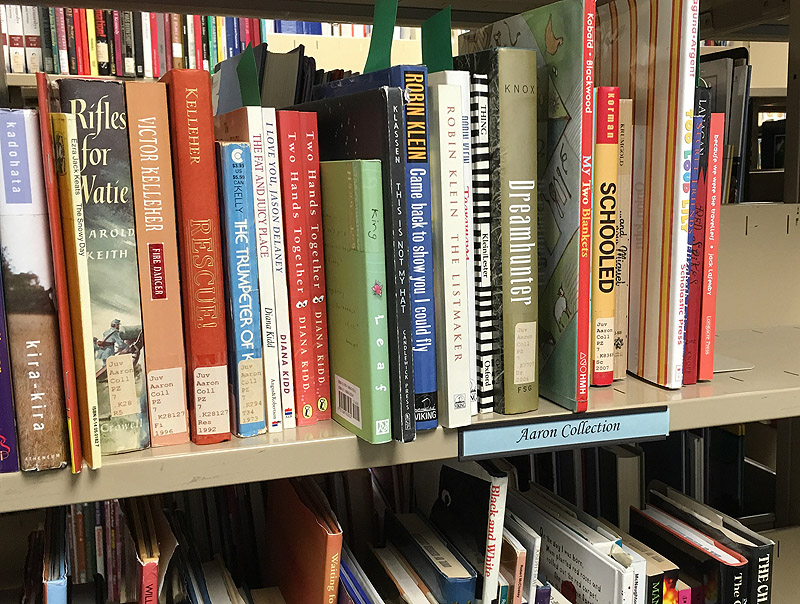

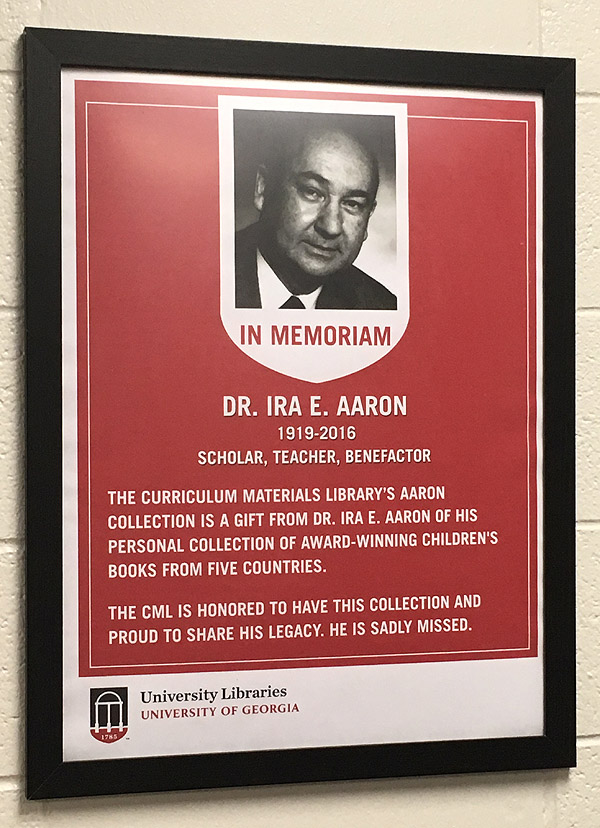

Ira Edward Aaron centennial

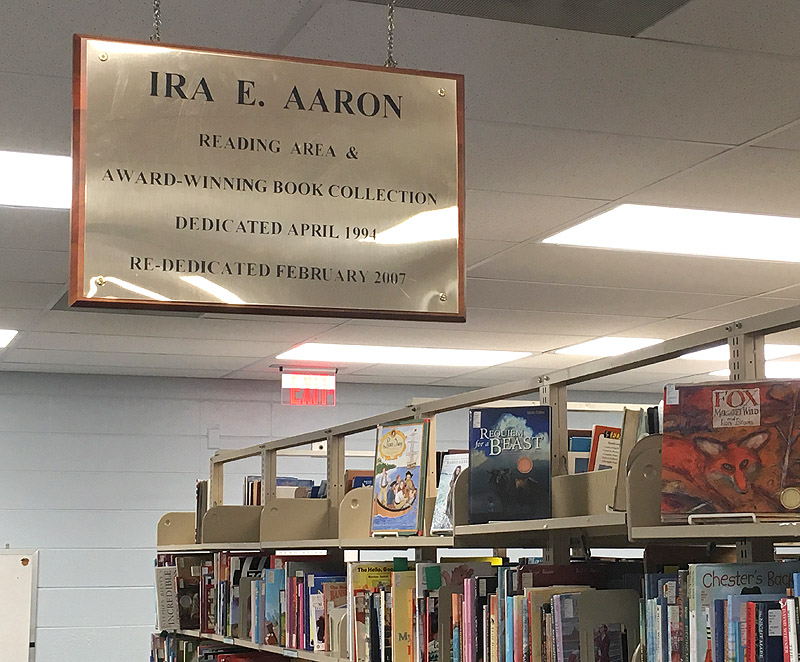

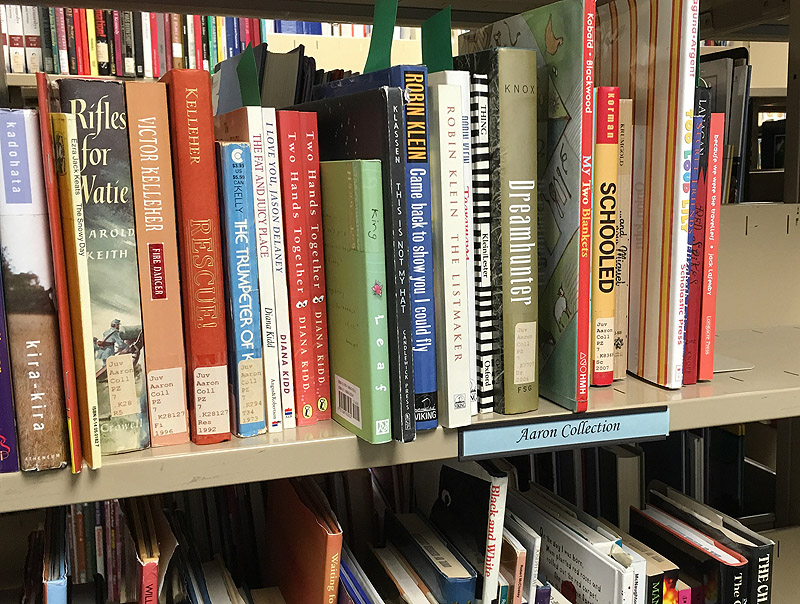

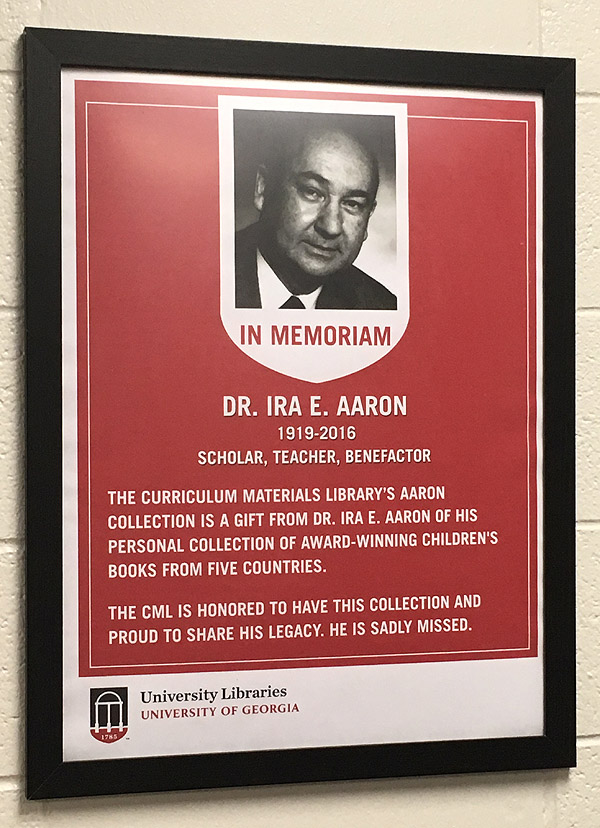

Yesterday (July 9) would have been the 100th birthday of my distinguished great-uncle, Ira Edward Aaron (1919-2016).

To mark the occasion, today I visited the University of Georgia's education library, which is located one floor directly below where his office used to be, and which has a reading area and a book collection named in his honor.


2019 July 6

A really good lens: Sigma 105/2.8 DG EX

Back in 2005, for Father's Day, my family gave me a Sigma 105-mm f/2.8 DG EX (or EX DG) telephoto lens for my Canons. Although billed as a macro lens (for close-up photography), this lens is also very sharp at infinity, and I've taken a lot of excellent astrophotos with it.

Now that I'm using two Nikon bodies extensively, this year I went looking for a lens like it in Nikon mount. That was a difficult quest. I found out the hard way that the Sigma 105-mm f/2.8 EX (not DG) lens is not as good. It has one fewer element and not as flat a field. I also tested two Nikon 85-mm f/1.8 AF-D lenses from the 1970s and found that they just aren't built to the standards of the digital era; digital cameras demand about five times as much sharpness as film.

For quite a while, no 105/2.8 DG EX lenses in excellent condition were available on the American used market. I eventually bought one from an eBay camera dealer in Japan and was startled that they got it here in three days, for about $20 in shipping. And I got a good price on it, too.

Sigma doesn't make a tripod collar for this lens, but I have long used the collar for a different lens (Sigma 70-200/2.8 APO) with some padding added. The collar covers up the focusing scale, which you don't need to see anyhow:

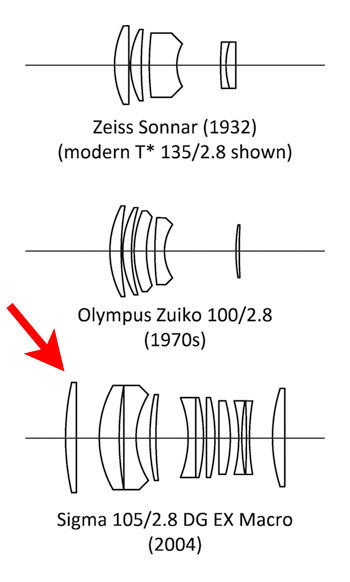

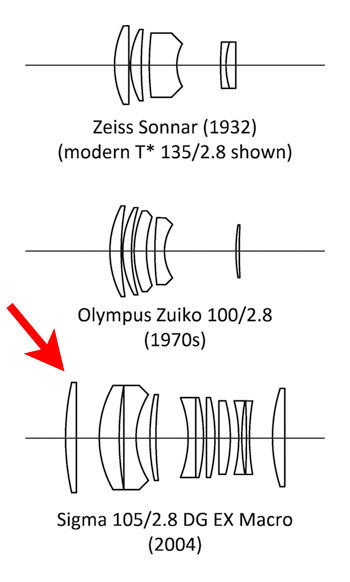

It is an 11-element lens derived from the classic Zeiss Sonnar. Here is an optical diagram (from Digital SLR Astrophotography) showing this lens and two of its distinguished ancestors:

I had to wait for good weather to do tests, but I am glad to report that the lens is good.

Of course, on a modern 24-megapixel camera, it shows some aberrations at the edges of the field at f/2.8, but fewer than most lenses do.

At f/4, it is almost perfect, with just a small area of degradation at one edge, probably due to slight decentration of an element, and only visible when the image is enlarged so much that the whole picture would be several feet wide.

This is not the best Sigma can do. I am told that their 105-mm f/1.4 Art lens was designed with astrophotography in mind. It costs $1600, so for now, I'll stick with the one I got used for $200. (The 105/2.8 also lives on as a DG OS lens, adding optical image stabilization, around $500.) Sigma is positioning itself as a maker of excellent lenses; in tests, Sigma products compete well against Canon, Nikon, and Zeiss.

Also, $1600 is not absurd when you consider what a decent telephoto lens cost in 1970 and scale that for inflation. We've gotten used to reasonably good lenses that cost a lot less, but, adjusted for inflation, really good lenses cost no more than they ever did, and they are sharper than ever before.
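Scaling a price for inflation is a simple proportion using the Consumer Price Index. This sketch uses approximate U.S. CPI-U annual averages (about 38.8 for 1970 and 255.7 for 2019); treat the figures as approximate rather than official values.

```python
# Approximate U.S. CPI-U annual averages (1982-84 = 100).
CPI = {1970: 38.8, 2019: 255.7}


def convert_dollars(amount, from_year, to_year, cpi=CPI):
    """Express an amount from one year in another year's dollars by CPI ratio."""
    return amount * cpi[to_year] / cpi[from_year]


print(round(convert_dollars(1600, 2019, 1970)))  # 243
```

In other words, a $1600 lens in 2019 is comparable to a lens that cost about $243 in 1970.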


NGC 6633 and star clouds

Here you see the star cluster NGC 6633, another cluster, and the edge of the Ophiuchus Milky Way, with dark nebulosity at the bottom.

Here and elsewhere, the cross spikes on bright stars are largely due to diffraction from crosshairs I added in front of the lens to help make the bright stars stand out. Some diffraction spikes are also due to the lens's diaphragm.

This was taken as a test of how sharply the 105/2.8 renders stars, and also of how well a PEC-corrected AVX mount will track the stars with this lens without guiding corrections (answer: perfectly for at least 4 minutes). This is a stack of eight 4-minute exposures, Sigma 105/2.8 at f/4, Nikon D5500 H-alpha modified, ISO 200.
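To put "perfectly for at least 4 minutes" in perspective, it helps to compute the image scale: how much sky each pixel covers. The standard small-angle formula is 206,265 × pixel size / focal length (same units), giving arcseconds per pixel. This sketch assumes the D5500's 23.5-mm-wide sensor with 6000 pixels across. Over 4 minutes the sky turns about a degree (roughly 15 arcseconds per second × 240 seconds), so the mount has to cancel nearly all of that motion for the stars to stay within a pixel or so.

```python
ARCSEC_PER_RADIAN = 206265.0


def pixel_pitch_um(sensor_width_mm, width_px):
    """Center-to-center pixel spacing in micrometers."""
    return sensor_width_mm * 1000.0 / width_px


def image_scale_arcsec_per_px(pitch_um, focal_length_mm):
    """Sky angle covered by one pixel: pitch divided by focal length, in arcseconds."""
    return ARCSEC_PER_RADIAN * (pitch_um / 1000.0) / focal_length_mm


pitch = pixel_pitch_um(23.5, 6000)                       # Nikon D5500: 23.5 mm wide, 6000 px
print(round(pitch, 2))                                   # 3.92
print(round(image_scale_arcsec_per_px(pitch, 105), 1))   # 7.7
```

At about 7.7 arcseconds per pixel, tracking errors of a few arcseconds are unlikely to show as trailing, which is part of why a moderate focal length like 105 mm is forgiving of unguided exposures.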


The Veil, through a veil, darkly

I've had the modified Nikon D5500 for over two months and, until June 30, had not used its superpower: its enhanced ability to see red nebulae, thanks to the filter modification. I was waiting for the earth's orbital motion to bring the Milky Way into the evening sky, and also, often, waiting for good weather.

But here's an example, taken in poor weather (the sky was hazy). This is the Veil Nebula in Cygnus, a stack of four 4-minute exposures, Sigma 105/2.8 at f/4, AVX mount, Nikon D5500 H-alpha modified, ISO 200.

You are looking at only the central part of the picture.

To compare how this nebula looked with an unmodified camera (under better conditions), click here.

This nebula emits both blue and red light, and the other camera picked up only the blue.


The nebulae around Gamma Cygni

Still photographing through hazy air, I ended the session by taking a series of nine 4-minute exposures of the nebulae around Gamma Cygni (same setup and camera as above).

You may recall that this field was what I looked at through binoculars the very first time I observed the sky, over 50 years ago, although of course I couldn't see nebulae, only stars. It remains one of my favorite parts of the sky.

(When you click through, note that the other picture does not have north straight up, but this one does.)


2019 July 2

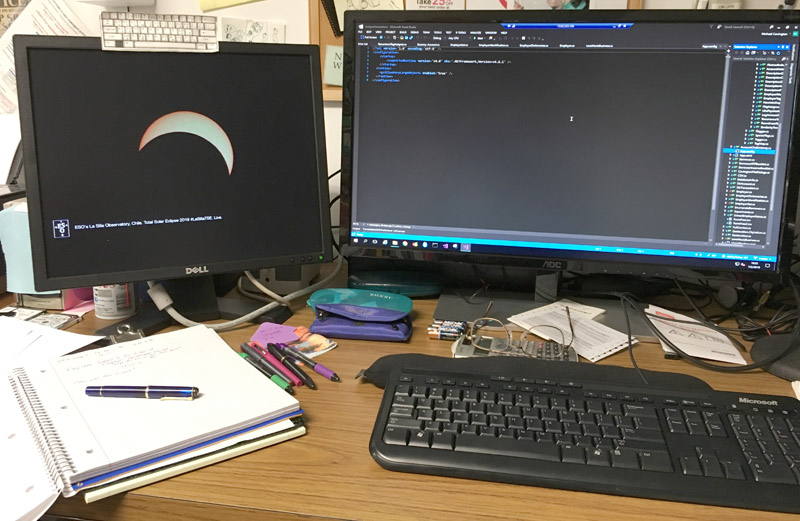

Eclipse, from a distance

I'm glad my friends in Chile had excellent weather for today's solar eclipse. I didn't go; I turned down an opportunity because I just wasn't sure I could get away.

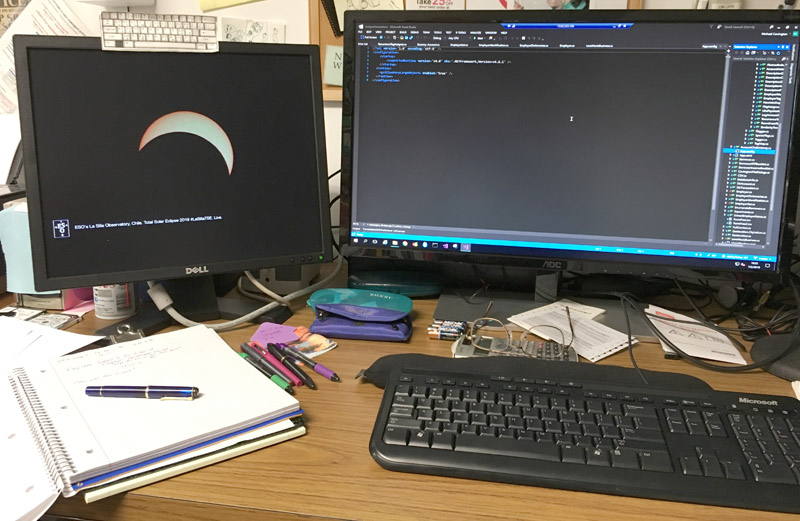

Instead, I used the Internet to view the European Southern Observatory's live video feed while I worked...

Advantages of having a two-screen computer...

And I'd like to point out something. There were no sunspots today, and how often do we get to see a solar eclipse on a day when there are no sunspots? Because of low solar activity, the corona was unusually smooth and symmetrical, not jutting out in random directions. Here's a video frame from the ESO:

Of course, more coronal structure will be visible in high-dynamic-range pictures that I have not yet seen. But I think pictures from today will become the textbook example of an eclipse at a time of low solar activity.


Jupiter is still at it

The Great Red Spot continues to shrink and spin off material.

This isn't a great picture, and none of this season's pictures are going to be great, because Jupiter is low in the sky, at least as seen from the Northern Hemisphere: it's on the side of the solar system that the north end of the earth's axis is tilted away from. My friends in the tropics and in Australia are having much better luck.

Stack of the best 50% of about 3000 video frames, C8 EdgeHD, 3× extender, ASI120MC-S camera, sharpened with RegiStax.



This is a private web page, not hosted or sponsored by the University of Georgia.

Copyright 2019 Michael A. Covington.

Caching by search engines is permitted.

To go to the latest entry every day, bookmark http://www.covingtoninnovations.com/michael/blog/Default.asp and if you get the previous month, tell your browser to refresh.

Portrait at top of page by Sharon Covington.

This web site has never collected personal information and is not affected by GDPR.

Some older pages that contain Google Ads may use cookies to manage the rotation of ads.

No personal information is collected or stored by Covington Innovations, and never has been.

This web site is based and served entirely in the United States.

In compliance with U.S. FTC guidelines, I am glad to point out that unless explicitly indicated, I do not receive substantial payments, free merchandise, or other remuneration for reviewing or mentioning products on this web site.

Any remuneration valued at more than about $10 will always be mentioned here, and in any case my writing about products and dealers is always truthful.

Reviewed products are usually things I purchased for my own use, or occasionally items lent to me briefly by manufacturers and described as such.

I am an Amazon Associate, and almost all of my links to Amazon.com pay me a commission if you make a purchase. This of course does not determine which items I recommend, since I can get a commission on anything they sell.
