This web site has never collected personal information

and is not affected by GDPR.

Some older pages that contain Google Ads may use cookies to manage the rotation of ads.

No personal information is collected or stored by Covington Innovations, and never has been.

This web site is based and served entirely in the United States.

|

2018

May

30

|

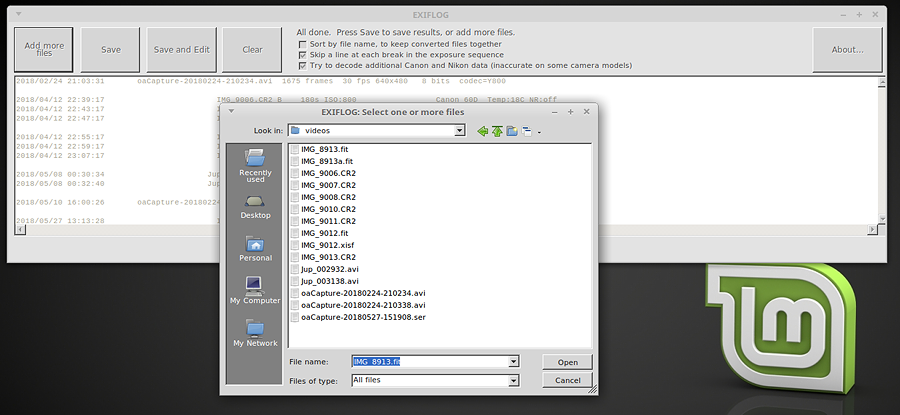

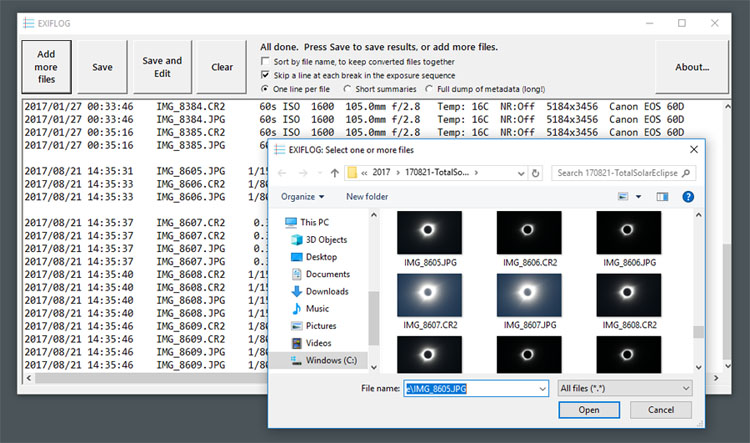

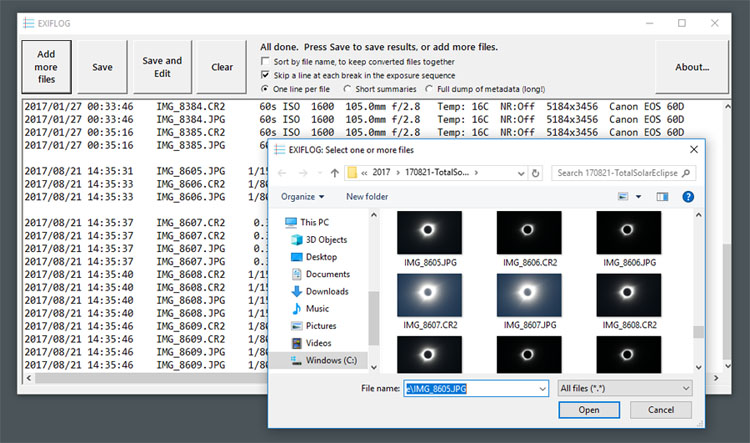

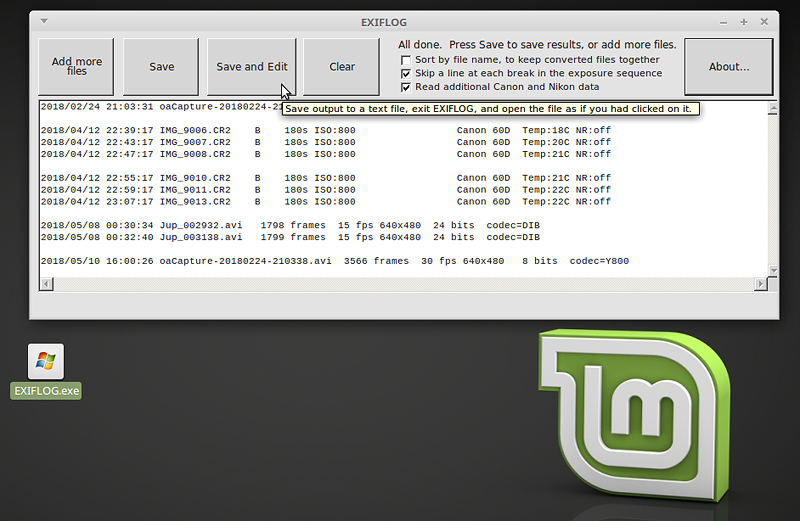

And EXIFLOG for Linux too (and macOS?)

I decided to resume development of EXIFLOG version 2, side-by-side with EXIFLOG version 3,

and have added FITS, XISF, and SER support to them both.

Here's why. Version 2:

- Is faster, though a bit less full-featured (there are a few cameras with which it does not work well,

and it won't do full dumps of metadata).

- Runs from a single .EXE file without installation (although you can also install it under Windows).

- Runs under Linux (and probably also macOS) using Mono.

Version 3 supports all digital cameras (at least, all known to ExifTool, which is

built into it) but is only for Windows and requires installation. Also, it's slower.

Linux compatibility is what's important to me; I am now doing all my video image acquisition

under Linux in order to dodge possible further changes in Windows.

Click on the picture above for more information. And I'd like to hear from anyone who

successfully runs EXIFLOG under macOS.

Permanent link to this entry

|

2018

May

28

|

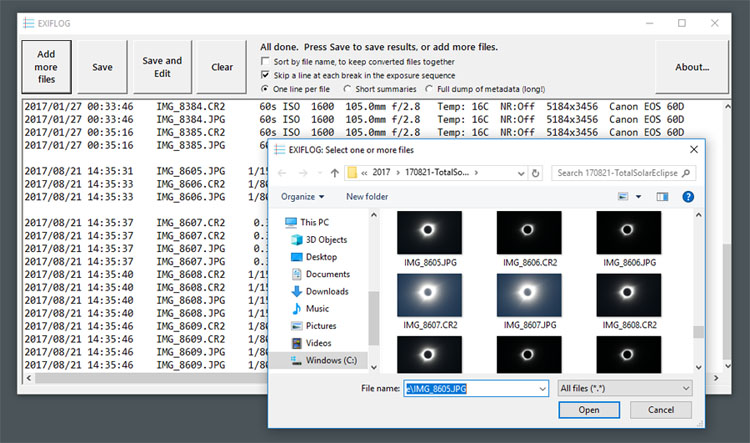

Another improvement to EXIFLOG

Two EXIFLOG version updates in the same month.

Two and a half weeks ago I rolled out version 3.2 of my photo-logging software,

to correct a bug, and now, meet version 3.3. (Click on the picture to get it. It's free.)

EXIFLOG reads a set of picture files and makes a text file that lists them with basic

exposure information. I use it to keep track of the dozens of files I create in every

astrophoto session. But you can use it in daytime photography too.

Now I've added support for SER, XISF, and FITS files (but not MaxIm DL's proprietary compressed FITS,

which in my opinion should have been called by a different name, maybe CFITS).
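To give an idea of what reading exposure information from a FITS file involves, here is a minimal sketch in Python. It is not EXIFLOG's code, only an illustration of the FITS header layout: 80-character "cards" packed into 2880-byte blocks and terminated by an END card. The function name `read_fits_header`, the helper `make_card`, and the sample header are made up for the example, and EXPTIME is assumed as the exposure keyword; files from some programs use other keywords.

```python
import io

CARD = 80      # each header "card" is 80 ASCII characters
BLOCK = 2880   # headers are padded to a multiple of 2880 bytes


def read_fits_header(stream):
    """Return the primary header of a FITS stream as a dict of strings.

    Simplified: doubled quotes inside string values are not handled.
    """
    header = {}
    while True:
        block = stream.read(BLOCK)
        if len(block) < BLOCK:
            raise ValueError("truncated FITS header")
        for i in range(0, BLOCK, CARD):
            card = block[i:i + CARD].decode("ascii", errors="replace")
            keyword = card[:8].strip()
            if keyword == "END":
                return header
            if card[8:10] != "= ":
                continue  # COMMENT, HISTORY, or blank card: no value
            value = card[10:].lstrip()
            if value.startswith("'"):
                # String value: keep the text between the quotes
                header[keyword] = value[1:].split("'", 1)[0].rstrip()
            else:
                # Number or logical: drop any trailing "/ comment"
                header[keyword] = value.split("/", 1)[0].strip()


def make_card(text):
    """Pad one line of header text to a full 80-byte card (for the demo)."""
    return text.ljust(CARD).encode("ascii")


# A made-up header, built in memory so the example needs no real file
cards = [
    "SIMPLE  =                    T / conforms to FITS standard",
    "BITPIX  =                   16",
    "NAXIS   =                    0",
    "EXPTIME =                 30.0 / exposure time in seconds",
    "OBJECT  = 'M13     '",
    "END",
]
raw = b"".join(make_card(c) for c in cards).ljust(BLOCK, b" ")
header = read_fits_header(io.BytesIO(raw))
print(header["OBJECT"], header["EXPTIME"])
```

With a real file, you would pass `open(filename, "rb")` instead of the in-memory stream.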

There's more. I am going to resume development on version 2 (the version that can run under

Linux and macOS using Mono). The next release will be 2.6 and will include SER, XISF, and FITS

support. It will continue to be less complete and accurate in its support of camera files, but

still good enough for most purposes.


How an eccentric person rests

Yesterday, I was still quite exhausted from the trip to Kentucky and wanted a day off,

a day to get away from it all. So I sat down and spent several hours crafting major

improvements to EXIFLOG.

That may seem a strange thing to do. To me, it was like doing a craft or art project or

reading a very engaging detective story. I needed to get away from interruptions and

think about just one project for the whole day, and this project made that possible.

(I thank Melody and Sharon for letting me!)

I have a very long attention span, although I can "come up for air" and do other things that

are not too demanding while taking breaks. In this, I am apparently the opposite of many

if not most people. It seems that most people thrive on interruptions — get bored if

there are no interruptions — think nothing is going on if they are not being interrupted.

These are the ones who are happy that their smartphones go "ping!" every two minutes all day long,

proving that somebody cares about them (or their money).

Not me. I like to get off by myself and build things, fix things, or think things.


|

2018

May

27

(Extra)

|

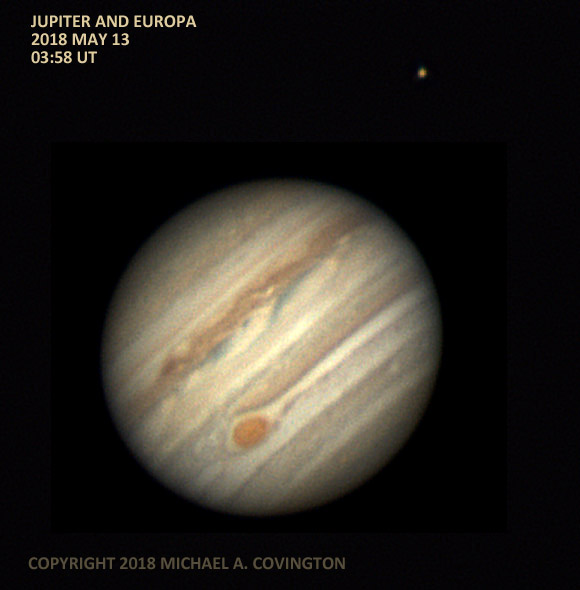

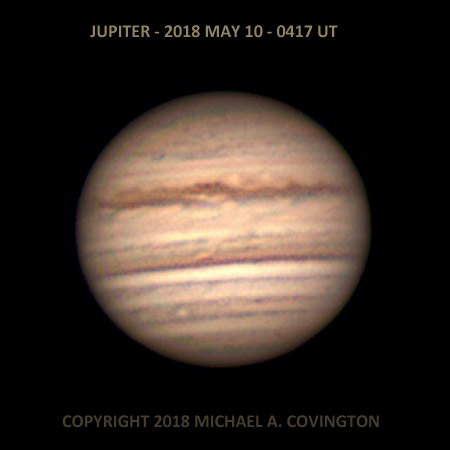

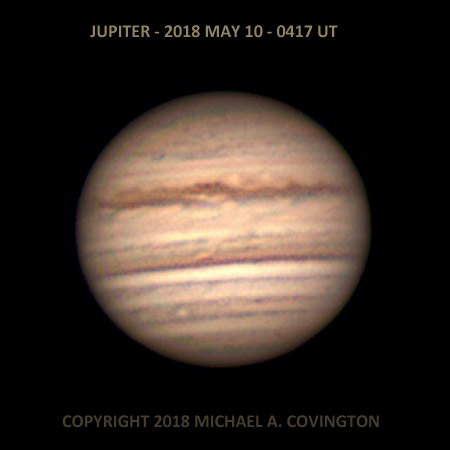

First light with the ASI120MC camera

We are in the middle of what is projected to be four weeks of daily rain. Nonetheless,

last night around midnight I discovered that the sky was (partly) clear.

Rushing to grab the Celestron 5, I was able to put the ASI120MC camera through its

paces for the first time.

Because it has "native support" rather than just video-camera drivers, the ASI120MC is

more versatile than the early-model DFK and DMK cameras I've been using. The main

differences I've noticed (with several software packages) are:

-

You're not confined to a standard frame rate; the number of frames per second is

automatically adjusted based on the video bandwidth and exposure time.

-

Full frames (1.2 megapixels) at full bit depth (12 bits encoded in 16) seldom go faster

than 9 frames per second. That is a reason for wanting the faster, USB 3 version of this

camera. [I should add that 9 fps, i.e. 9×1280×960×16 bits (about 177 megabits) per second,

is only about 1/3 of the full USB 2 transfer rate (nominally 480 megabits per second).

This is apparently a limitation due to emulating USB 2 on USB 3

hardware, combined with some quirk of ASI's drivers. I have had intermittent success with

turning off USB 3 in the BIOS and then getting as much as 30 FPS.]

-

12-bit (16-bit) video files are stored in SER format, which little or none of my software

knows much about. (It is an emerging standard, and AutoStakkert handles it just fine.

I need to add support for it to EXIFLOG.) (Done, in version 3.3.)

-

To speed things up, the camera can be switched to 8-bit format and only a specified region

(ROI, region of interest) can be used, so that you get the speed of a smaller camera.

In the narrow-field picture below, I did that, but stuck with 16-bit format.
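The arithmetic behind the 9-fps figure in the list above can be checked with a few lines of Python. This is a back-of-the-envelope sketch: it counts raw pixel data only, ignores USB protocol overhead, and takes 480 megabits per second as the nominal USB 2 rate. The function name is just for illustration.

```python
USB2_BITS_PER_SECOND = 480_000_000  # nominal USB 2.0 high-speed rate


def video_bits_per_second(width, height, bits_per_pixel, fps):
    """Raw (uncompressed) video data rate in bits per second."""
    return width * height * bits_per_pixel * fps


# Full frame, 12 bits stored in 16, at 9 frames per second
full_frame = video_bits_per_second(1280, 960, 16, 9)

print(f"{full_frame / 1e6:.0f} Mbit/s")
print(f"{full_frame / USB2_BITS_PER_SECOND:.2f} of the nominal USB 2 rate")
```

Halving the bit depth or using a smaller region of interest reduces the data per frame in proportion, which is why those settings allow faster frame rates.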

I used the new Linux version of FireCapture on an Asus

laptop. The picture above is the best 75% of 764 frames, using the full 1280×960-pixel field,

slightly cropped and then downsampled. 5-inch Schmidt-Cassegrain telescope at f/10.


Mare Humorum

And here are the second and third pictures taken with the new camera, immediately after

the first one. They show a lunar "sea" (plain) called Mare Humorum. Like the picture

above, they are color images whose saturation has been increased to show the

subtle colors better.

First the full field (with some cropping due to image drift — I wasn't able to track

at lunar rate) and then an image made by using just part of the sensor in order to get

much faster downloads and a larger number of frames. Best 75% of about 900 and about

2200 frames respectively.


|

2018

May

27

|

Undocumented functions:

Matching wits with a Garmin nüvi 67LM GPS

[Updated.]

Regrettably, the documentation for my Garmin nüvi 67LM GPS is very poor.

It is little more than an incomplete checklist of what is on the computer menus.

To give you two examples of how bad the documentation is:

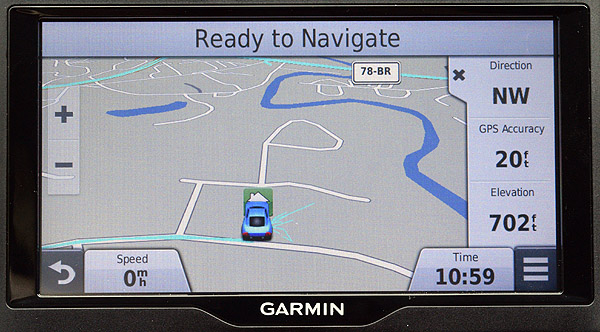

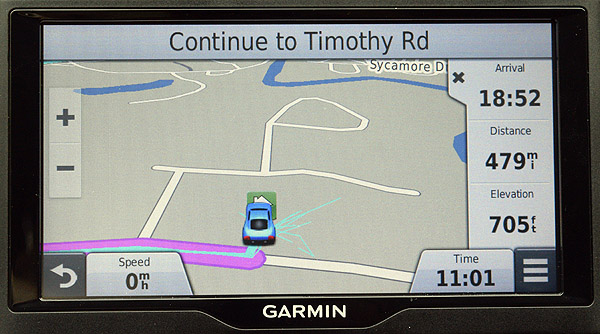

- The GPS can display, among other things, the arrival time, the direction of travel, and the elevation (altitude),

which is interesting when we cross the mountains. This functionality is not mentioned in the instructions.

- The instructions use the word "shortcut" without defining it;

it turns out to mean "link added to a computer menu." The idea that "shortcut" might

mean something having to do with roads and routes seems to have eluded them.

I had to switch from a Garmin nüvi 50 to a Garmin nüvi 67LM to accommodate the backup

camera a few months ago, but we had not used it seriously for navigation until this week.

I found a lot of familiar functions from the 50 were missing on the 67LM.

Or were they?

I did some digging, and here is what I dug up. First a short summary:

Recommended practices:

- Build a library of saved via points that you will use to control the routes

of trips when the GPS might not otherwise choose the route you prefer.

- Use the Trip Planner app for routes that involve via points, especially

if they are likely to be reused.

- In Trip Planner, convert each via point into a shaping point so that it

will not be announced and you will not be compelled to visit it.

- Display time and location data at the right of the screen using Trip Data.

Preliminaries

The GPS operates in two modes, with and without a route (a destination). In what follows

it will be important to know whether, at any given moment, you have a destination or not.

By click I mean to press the touchscreen momentarily at a point.

By drag I mean to press the touchscreen and move your finger while pressing; this is the

usual way to move the map.

Controlling the route with via points

A via point is a point you are required to drive past (or over) but not stop at.

By choosing via points, you can control the route.

For example, a via point on a certain road will make the route use that road.

The 50 provides for via points; the 67LM does not. Or does it? Actually, there is a way.

What you do is select a point that is in, or very close to, the road and then

add it to the existing route as an additional destination. If it is actually in the road,

you will be allowed to drive over it without stopping. But if it's even a few hundred

feet off the road, you will be commanded to go back and visit it!

Caution: Although it is desirable for a via point to be in the middle of a road,

don't put it in one carriageway of a divided highway. If you do, the nüvi 67LM will assume you

want to be traveling in that direction when you pass that point and will make you backtrack strangely

when you want to go back home!

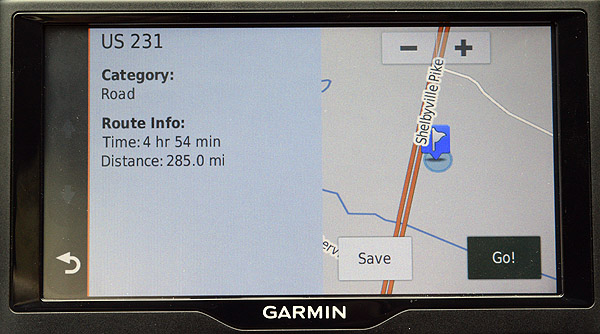

To select a point on a road, here's what to do:

(1) Click on the car symbol to make the map flat (not a perspective view).

Zoom the map out, using -, enough that you can identify where you want to put the point.

(2) Drag the map to center the point you are interested in.

(3) Zoom the map in, using +, until you can see the road clearly enough

to see its width (to distinguish the road from points alongside it).

(4) Click the point. If your click comes up in slightly the wrong place, just click again.

(5) When you've chosen the right point, click on the bar at the bottom that gives the

name of the road and possibly the street address:

This will bring you to a screen of information about the point, like this:

(6) Recommended: Click Save to put this point in your list of saved places.

I give all mine names that begin with "Via".

Then skip to (8) below and use Trip Planner.

Or, if you don't want to save the via point, and you are on a route,

you can add it to your route immediately.

To do that, click Go.

When you do,

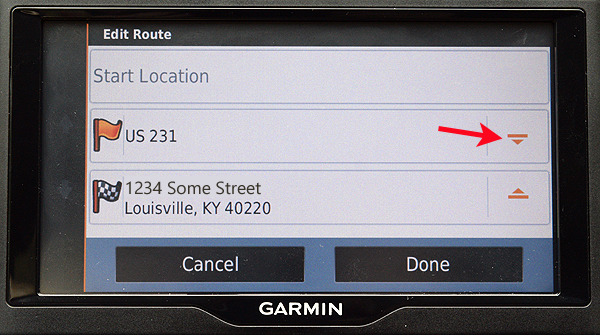

you'll see this:

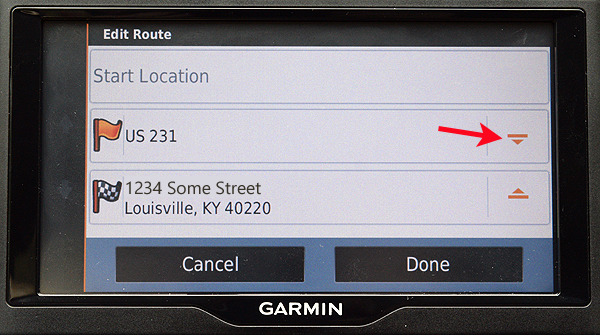

Make sure all the via points are in the right order, because you will be sent

to them in the order in which they appear! The red arrow indicates the icon to drag

to move a point up and down in the list.

(7) To add a saved via point to your route, go all the way back to the opening

screen, click "Where To?", call up the point from saved locations, add it as an

additional destination, and make sure it's in the right order as in the step above.

(8) Recommended: The Trip Planner "app" (under "Apps" on the

starting screen) lets you set up routes, edit them, and save and reuse them.

You can include via points.

It saves time if you add the via points and the destination to the trip in the

order in which they will be reached. Otherwise, make sure to keep track of

which one is to come last (the checkered flag) so that you aren't made to

backtrack. You can drag them to change order as in the picture above.

If there are at least 4 points, you can "Optimize Order," but this won't change

which one is last, so get that correct beforehand.

Trip Planner lets you convert via points into shaping points, which are via

points that are not announced as you drive over them and which you will not

be told to go back to if you miss them. In Trip Planner's Edit Destinations screen,

click the orange flag on a via point to do this.

(See this and this and especially this.)

Garmin's lack of documentation is regrettable.

I found this web page helpful.

Many such pages refer to Garmin support pages that no longer exist!

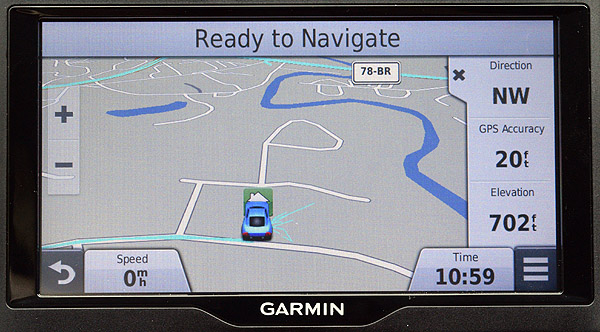

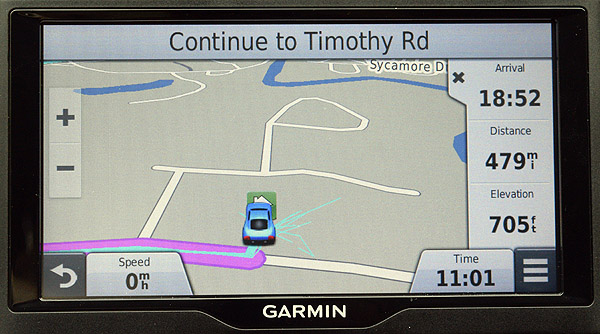

Displaying arrival time, elevation, and other data

My nüvi 50 had a column of numbers at the right-hand edge of the screen which

was missing on my nüvi 67LM. Here you see them after I brought them back,

displaying direction, GPS accuracy, and elevation:

And here's how they look when I have a destination. They show arrival time

(separate from the time of day at the bottom),

destination distance, and elevation:

Here's how to get them to appear:

(1) On the start screen (the screen that displays "Where To?"), choose Settings,

Map Tools, make sure Trip Data is checked, and Save.

(2) With the map on the screen, click the triple bar (≡).

(3) Click "Trip Data." This makes the column of data appear.

You can make it disappear by clicking the X on it, if you need more room for the map later on.

(4) Click on each of the three data items and choose which data item should appear there.

That is how I get time, distance, etc., rather than odometers.

(5) Do step (4) again. You have to do it once with a destination and once without a destination

in order to control the data displayed both ways.

Afterthought:

I think the nüvi 67LM was brought to market with unfinished firmware as well as

unfinished documentation.

Too much is simply not there or not consistent.

And the nüvi 67LM stayed on the market only a relatively short time.

It has been superseded by the Garmin Drive product line.


|

2018

May

26

|

Tragic school shootings: What should be done?

Our civilization has sunk to the point that we are now having a school shooting every

few days. All the things I used to say about handling rare events no longer apply.

School shootings have become part of the culture, a thing to do, almost a

teenage fad. It reminds me of how, for a short time around 1970-72, hijacking airplanes

was a thing to do, and a motley assortment of troubled people did it.

Some observations:

(1) If you use this purely to debate gun control, on either side, you're not helping.

This is more than just a gun control issue.

Without reaching a consensus on gun control, we can still address other concerns:

- why it was so easy to get into the school building with a gun;

- the troubled shooters and the way other people treated them (often very badly!);

- the mental illnesses of school shooters and the treatment they

were receiving for them, or not receiving; even the effects of medications;

- the fact that the last several shooters have been captured alive and

we can find out what was going on in their minds.

Let's not turn this solely into a gun control debate.

There are a lot more angles from which the problem needs to be approached.

And more to the point, in the short run, gun control won't help, because the guns are

already out there.

Any benefit from gun control will take some time to show up.

But please don't read the following just to classify me as "pro" or "anti."

If that's the way your mind works, you're part of the problem.

I'm not toeing the NRA's line here, nor am I coming to take your gun.

Open your mind and think about the real problem, not just one narrow issue.

(2) This is crime, not just mental illness. Up to a few weeks ago, all the shooters

seemed to be genuinely deranged. Lately, they are just angry young men with grievances,

and shooting up crowds at schools is what they do.

I thought the crime would be deterred when shooters started being captured alive and jailed.

That doesn't seem to be working yet. I hope it will. Meanwhile, let's classify the

problem as crime prevention and detection, not just psychology.

Many of the shooters have sent clear signals that they were going to turn violent.

We may need more publicity about the fact that the shooters are in jail.

Lots of shooters were willing to die in a blaze of glory but are deterred by the

prospect of a long, slow trial process followed by imprisonment.

What can we do to end the series of school shootings now?

Analyst Malcolm Gladwell has likened them to a slowly unfolding riot, in which

each violent act makes the next one easier and more likely. It must stop.

I think that, despite the cost and awkwardness, almost the only thing that will work

in the short term is to make these crimes physically much harder to commit.

Harden the targets.

Make it harder to get into a school with a gun.

Yes, a heavily guarded school is unfriendly; it would be better if it were not

necessary; but right now, the crimes must stop.

We also need criminal penalties and quick prosecution of parents who make

guns available to youngsters who commit crimes. Hold the gun owner responsible

for what is done with the gun unless it is actually stolen by breaking locks or

the like. Maybe Dad is more mature than Junior and can put some obstacles in the way

of this new form of juvenile delinquency. If I had a gun and a son, I would explain

it very simply: "I keep this gun locked up when I'm not personally present, because

no matter what happens to it or who takes it, I don't want you to be a suspect."

(3) Let's admit that high school can be a miserable experience. And not just

for the students whose mental health has actually broken down.

We need to care about the whole psychological and moral environment.

Moral as well as psychological. It is vital to bring up children in an

environment where they learn how to tell right from wrong.

Does the school have a culture that tolerates bullying or

encourages the notion that some people are worthless?

Are some students made to feel unwanted (even by teachers)?

Is it traditional to make impossible demands of people, or put them in impossible situations

with petty but frustrating things, such as rules or time limits that can't actually be fulfilled,

and then berate the people who complain or who break down?

Are people forbidden to point out ways the school could be better,

and told they don't have "school spirit" when they point out a shortcoming?

Does the school itself respect the basic human dignity of every student?

Or does its culture revolve around picking out a few superior

people (e.g., varsity athletes) and trampling on the rest?

Some school traditions are built around vicious elitism.

Does the disciplinary system reflect a genuine concern for fairness, or have

teachers and administrators taken refuge in "zero tolerance," where people are

punished for circumstances rather than willful acts?

Is the school simply too big and impersonal?

(4) We are going to have to address the fact that the shooters are

white males from gun-owning families.

Gun culture and machismo do play a role.

Other miserable and bullied students, such as ethnic minorities, never

turn into shooters. It's a white male thing.

I am convinced that, irrespective of what actual new limits are placed on guns,

American gun culture must change.

You may be for or against gun control, but, if you are worthy to be

my fellow citizen, you must be against gun violence.

If your head is full of fantasies about gun battles;

if you despise your fellow citizens who are a different color, religion, or political inclination;

if you taunt your neighbors with ΜΟΛΩΝ ΛΑΒΕ stickers and act as if your gun entitles

you to be mildly hostile to everyone,

then you are part of the problem;

you are the kind of person responsible gun owners

should shun.


|

2018

May

25

|

A grand visit!

Melody and I have just returned, exhausted but happy, from a road trip to Louisville, Kentucky,

to visit Cathy and Nathaniel and all four grandchildren.

Here you see only three, Benjamin, Philip, and Mary, piled on top of Melody;

we think Emily was actually also in the pile but hidden from view.

I still have some athletic ability left — I can wrestle a 3-year-old grandson and

win — some of the time!


|

2018

May

18

|

New planetary video camera and some software notes

ZWO ASI120MC under Windows and Linux

I'm about to change my lineup of astronomical video cameras, and

the first change arrived today.

Until now, I've been using ImagingSource DFK and DMK 21AU04 cameras like the blue one above.

They're built like tanks, but they're about 8 years old — only 640×480

pixels and only 8 bits per pixel.

Indeed, some newer software is having trouble supporting them because they're a bit

behind the wave of technology. I emphasize that ImagingSource is an excellent

manufacturer, and the cameras they make today give much higher performance.

They cater for industrial computer vision as well as astronomy.

The newcomer is a ZWO ASI120MC like the one in red above, from China.

I bought it used; it is still not the latest thing, but it's a big step up,

1280×960 pixels, 12 bits per pixel.

ZWO builds low-priced cameras with excellent sensors for amateur astronomy.

It comes with a tube that fits a telescope's eyepiece tube, like the DFK's, but also with

a wide-angle lens (shown in the picture) for daytime experiments and all-sky weather or meteor observing.

This is still not the latest thing; my plan is to have a ZWO ASI120MM-S

(the faster USB 3.0 monochrome version of this camera)

for autoguiding and possibly the faster version of the color one as well.

But in the meantime I want to share some notes about the ASI120MC, which is a very popular

camera with amateur astronomers right now.

ASI120MC under Windows

Crucially, you need to install two driver packages,

the "native drivers" and the "DirectShow drivers." These enable Windows software to see

the camera two ways, as an imaging device and as a video camera.

If you use ASCOM, you may also need the ASCOM drivers on the same page.

For image capturing software, skip ASICAP (which is offered to you on that same page)

and use SharpCap instead, an excellent piece of freeware

supported by ZWO.

ZWO says that FireCapture only supports the ASI120MC in version 2.6 (and up).

That squares with my experience, and even then, I had problems with deBayering.

I'm a little puzzled because many earlier versions of FireCapture show the ZWO ASI camera

interface as one of the options.

Maybe older drivers were different.

[Update: This needs further investigating and I will post updates.]

PHD2, the autoguiding software,

supports the ZWO ASI natively. It can probably also see it as a video camera,

and also through ASCOM, but I haven't tried those ways of accessing it.

Of course I don't plan to autoguide with a color camera, but you can.

There is even a USB-to-ST4 converter inside the camera, with an ST4 connector

on the camera body.

Quickly trying other software, I found that Metaguide sees the ASI120MC as a video

camera (DirectShow), and PEMPRO 2 does not see it. My impression was that PEMPRO 3 does,

but I was not able to test extensively because my free trial had run out.

ASI120MC under Linux

In what follows I am indebted to Nicola Mackin's very

helpful web site and in particular her AstroDMx Capture software.

Just like me, she started out with early model DMK and DFK cameras and matched

wits with their quirks before moving up to an ASI camera.

To get the ASI120MC or ASI120MM (not -S) to work under Linux, you have to reflash the

firmware. When you do, according to Nicola Mackin, you may have problems with long

exposures (over 1 second) at 16-bit depth. But I did it,

and PHD2 (both Windows and Linux) has no trouble with 5-second exposures

(maybe it uses 8-bit depth).

The firmware updater (which runs under Windows) is on the

downloads page under "For Developers".

Flash the ASI120MC with the "compatible" ASI120MC file rather than the one whose name

does not contain that word.

Results? After flashing the firmware, I find the camera supported natively

and working well with oaCapture, AstroDMx Capture, and PHD2 under Linux.

[Update: It also works well with the new Linux version of FireCapture,

and if you have installed oaCapture, you do not need FireCapture's asi.rules file.]

Before flashing the firmware, the symptom was that the software saw the camera and

could connect to it but could not download any frames.

The newer ASI120MC-S and ASI120MM-S do not have this problem and do not need reflashing.


|

2018

May

14

(Extra)

|

What limits the sharpness of my telescope?

Why do some of my Jupiter pictures show more detail than others?

Why are none of them as good as pictures from the Hubble Space Telescope?

More generally, what limits my ability to see and photograph fine detail

on celestial objects? Why are the images always just a bit blurred?

Not magnification. Don't I just need a "more powerful" telescope?

No. Visually, I usually use it at 100 to 300 power.

That shows me all the detail the telescope picks up.

I could get higher magnification by changing eyepieces, but all I'd see is

the same image, bigger and dimmer but with no additional detail.

Cheap toy telescopes can give you "600 power" but it's a very blurry image.

No; the limits come from three other things.

(1) Optical quality.

Not actually a factor in my case; for 150 years, people have known how to build

telescopes good enough that the limiting factor is always something else.

But not all telescopes have first-rate optics.

There was a minor scandal because mass-produced telescopes in the 1980s,

during the build-up to Halley's Comet, often had inferior optics.

Standards were higher before then (anything from about 1960 is likely to be

very good) and are higher again now.

One optical issue I do have to keep up with is collimation, lining up the

mirrors and lenses so the light goes straight through the center.

This is an adjustment I touch up periodically.

(2) The wavelength of light.

Whenever light passes through a small opening, the light waves are disrupted

at the edges. You can demonstrate this for yourself by looking through tiny holes

in a piece of aluminum foil.

Make holes from about 1/4 inch down to the smallest you can make with a pin or needle.

You can see just fine through many of the holes; maybe even better than with your

unaided eye, because you're using a smaller, smoother section of the lens of your eye.

But at some point, as you move to smaller and smaller holes, the view will become not

only dim but also blurry. Diffraction is taking its toll.

Now you might think that the 8-inch-diameter front lens of my telescope is not a "small opening"

and wouldn't have this effect.

But it does when the image is magnified enough.

If there were no other limitations, I might get pictures of Jupiter twice as sharp as

the best ones I've posted here, but not ten times as sharp.
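To put rough numbers on the diffraction limit, here is a small sketch using the Rayleigh criterion, under which the smallest resolvable angle is 1.22 times the wavelength divided by the aperture diameter. The 550 nm wavelength (green light) is an assumption, and the Rayleigh criterion is only one common convention for the limit, so treat the results as approximate.

```python
import math

ARCSEC_PER_RADIAN = math.degrees(1) * 3600  # about 206265


def rayleigh_limit_arcsec(aperture_inches, wavelength_nm=550.0):
    """Approximate diffraction limit in arc-seconds (Rayleigh criterion)."""
    aperture_m = aperture_inches * 0.0254
    wavelength_m = wavelength_nm * 1e-9
    return 1.22 * wavelength_m / aperture_m * ARCSEC_PER_RADIAN


for aperture in (5, 8, 14):
    limit = rayleigh_limit_arcsec(aperture)
    print(f"{aperture}-inch aperture: about {limit:.2f} arc-seconds")
```

For the 8-inch telescope this comes out to roughly two-thirds of an arc-second, and the limit shrinks in proportion as the aperture grows.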

(3) The unsteadiness of the air.

If you look at a planet through a telescope at 200×, you'll think you're looking

at something on the bottom of a swimming pool.

What's this constantly moving stuff, like water, that we're looking through?

It's the air, which is constantly turbulent.

We have to look through the turbulence in order to see anything else.

Astronomers call the steadiness of the air the "seeing."

It's better at some times and places than others; better in mild weather than

after a sudden change; better over grass than over concrete; best of all if

you're looking off the edge of a high cliff.

That is why my results vary so much from night to night.

It is also why, until recently, serious planet observers, using their eyes and making

drawings, could record more detail than anyone could photograph.

Planet observing remained a visual, not photographic, practice at least

through the 1980s. Good observers would simply stare steadily, waiting for moments

of steadiness, and take note of the details that suddenly became visible for a moment.

Now video astronomy gets around this.

What I do is record about 2 minutes of video — 30 pictures per second — and

use software to align and stack the best of them.

The original idea was that the software would select the few sharpest images from brief

moments of steady air. But we all quickly found out that we got better results if we

used the best 50% or the best 75%, not the best 5% or 2% as visual astronomers might have

guessed.

The reason? Many random blurs, combined, become a Gaussian blur, which is a mathematical

function that can be undone by computation. If you combine a large number of randomly blurred

images, you can assume that the light had an equal chance of being deflected in each direction

each time (with a distribution of probable distances), and there is a simple algorithm to undo

the blur. That's our super-power these days.
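The claim that many random blurs combine into a Gaussian can be tried out with a toy model. The sketch below is only a one-dimensional illustration, not what stacking software does: it assumes each recorded position is the sum of several small, independent, equally likely left-or-right deflections, and checks how much of the result falls within one standard deviation of the mean. For a true Gaussian that fraction is about 0.683. The function names and the numbers of samples and deflections are arbitrary choices for the demonstration.

```python
import random
import statistics


def stacked_offsets(n_samples, deflections_per_sample, rng):
    """Toy 1-D model: each position is the sum of several small,
    independent, symmetric random deflections."""
    return [
        sum(rng.uniform(-1.0, 1.0) for _ in range(deflections_per_sample))
        for _ in range(n_samples)
    ]


def fraction_within_one_sigma(values):
    """Fraction of values within one standard deviation of the mean."""
    mean = statistics.fmean(values)
    sigma = statistics.pstdev(values)
    return sum(abs(v - mean) <= sigma for v in values) / len(values)


rng = random.Random(2018)  # fixed seed so the run is repeatable

single = stacked_offsets(20_000, 1, rng)     # one deflection: flat distribution
combined = stacked_offsets(20_000, 12, rng)  # twelve deflections combined

print(f"one deflection:     {fraction_within_one_sigma(single):.3f}")
print(f"twelve deflections: {fraction_within_one_sigma(combined):.3f}")
print("true Gaussian:      0.683")
```

A single flat (uniform) deflection gives a fraction near 0.58, while the combined case lands close to the Gaussian value, which is the point of the argument above.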

How to do better? If I had a large budget and

wanted better planet images, I'd probably get a camera

that is more sensitive to light (so I could take more well-exposed video frames per second)

and a larger telescope (up to 14 or 20 inches in diameter).

I might even move to a site with steadier air, if I could find one.

But enormous telescopes don't give sharper images.

They do pick up more light from faint objects, which is why observatories build them

bigger and bigger.

But if you want planetary detail, you're facing a compromise; the bigger the telescope,

the more air turbulence it has to look through.

Depending on how steady the air is, the sharpest images come from telescopes about 20,

14, 10, 8, or even 5 inches in diameter.

My 5-inch telescope performs about the same all the time; my 8-inch definitely benefits

from "good seeing;" and anything larger would be even more at the mercy of the atmosphere.

A large telescope can give a worse image than a small one if the air isn't steady enough.


|

2018

May

14

|

Jupiter and Ganymede

When I set up the telescope last night, I noticed that one of Jupiter's satellites

was casting its shadow on the planet. Although the air wasn't as steady as it has been

recently, I managed to get a picture of this striking sight.

Now the spell of clear weather is ending — indeed, the sky was becoming hazy as

I took this picture — and I'll be doing something other than astrophotography in

my (not copious) spare time for a few days.

Same setup as last night; best 50% of about 3600 video frames.


|

2018

May

13

|

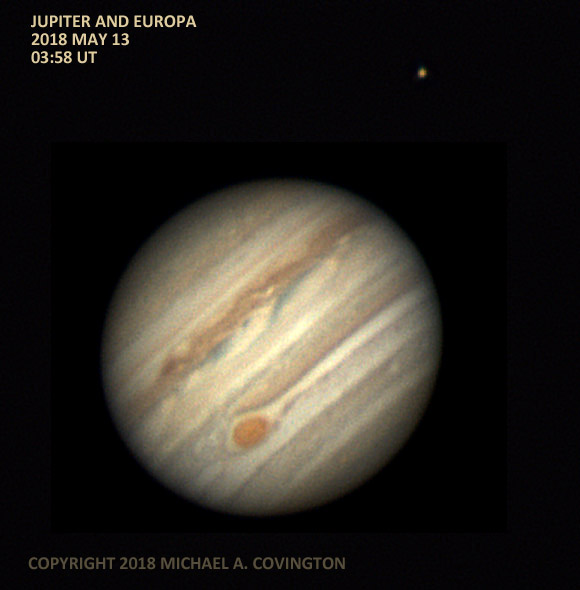

Jupiter with the Great Red Spot and Europa

Last night, things came together: exceptionally steady air, the Great

Red Spot turned toward us, and one of the satellites of Jupiter close enough

to be in the picture.

Here is the same picture processed with higher contrast:

That looks a bit less like the real Jupiter but brings out more detail.

Same setup as the previous night; best 80% of about 3500 video frames.

I discovered that AVI files created by oaCapture and processed by

AutoStakkert 2 come out mirror-imaged.

Accordingly, I corrected several recently posted pictures

(the corrected ones, below, include the date and time).

I suspect oaCapture is doing something slightly unconventional with the

AVI file format because many video players will not play the files at all,

nor does the current version of AutoStakkert 3 accept them, though the

next version is going to.

Running the files through PIPP eliminates both problems.

That was my usual practice until just recently, which is why only a

grand total of 4 pictures were affected.

[Update:] I've been in touch with the developer of AutoStakkert.

A future version, probably 3.1, will handle oaCapture files correctly.


|

2018

May

12

|

Jupiter

Jupiter at 12:09 a.m. EDT (04:09 UT) on May 12.

Same 8-inch telescope as before, 3× rather than 2× extender,

about 3500 video frames recorded with oaCapture. Setting the telescope up

on the grass rather than the concrete definitely helps, although the image

can still shift about 20 arc-seconds when I step close to a tripod leg.

I discovered that if oaCapture is in 2 windows and they are closed in the wrong

order, it is possible to leave oaCapture still running with no windows on the

screen. (I haven't experimented to pin this down yet.) Then, when you start

oaCapture again, the new one can't connect to the camera.

More news soon.

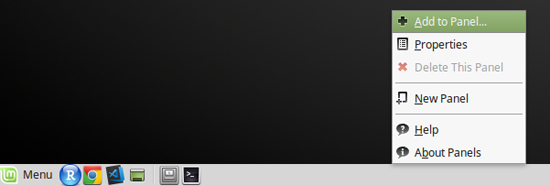

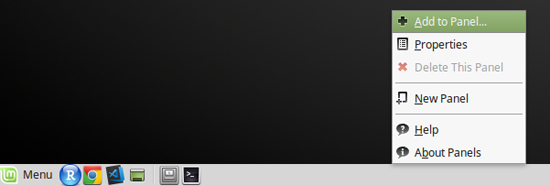

[Update:]

What had happened is that: (1) Linux Mint was set not to show the window list on the taskbar,

so minimized programs were completely invisible; (2) I had minimized oaCapture rather

than closing it. I could have gotten back to it with Alt-Tab.

What I recommend is, right-click on a blank area of the taskbar

(which in Linux is called the panel) and choose "Add to panel"

and "Window List." Then you'll always see your minimized apps.
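A quick way to tell from a terminal whether a copy of the program is still running, windows or no windows, is to look for its process. This is only a sketch: the process name oacapture is an assumption (check with ps if in doubt), and the helper still_running is made up for illustration.

```shell
# Report whether a program with the given process name is still running.
# "oacapture" as the process name is an assumption, not taken from the
# oaCapture documentation.
still_running() {
    # pgrep -x matches the process name exactly; output is discarded,
    # only the exit status is used.
    pgrep -x "$1" > /dev/null
}

if still_running oacapture; then
    echo "oaCapture is still running (perhaps minimized)"
else
    echo "no oaCapture process found"
fi
```

If it reports a running copy, Alt-Tab or the window list should bring it back.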

Permanent link to this entry

|

2018

May

11

(Extra)

|

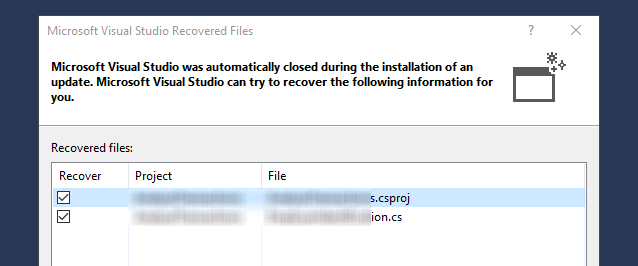

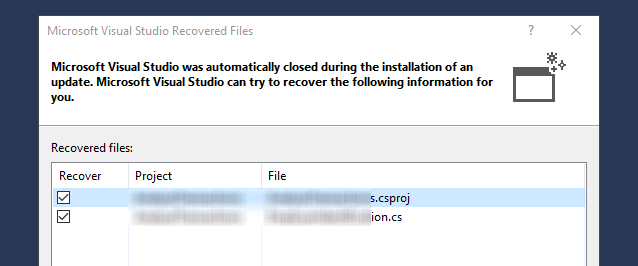

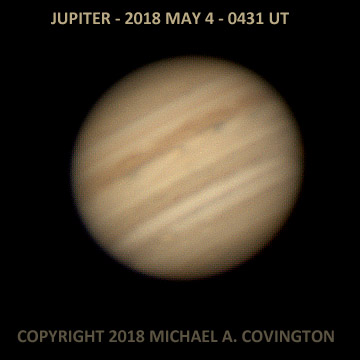

Yet another interruption, courtesy of Microsoft

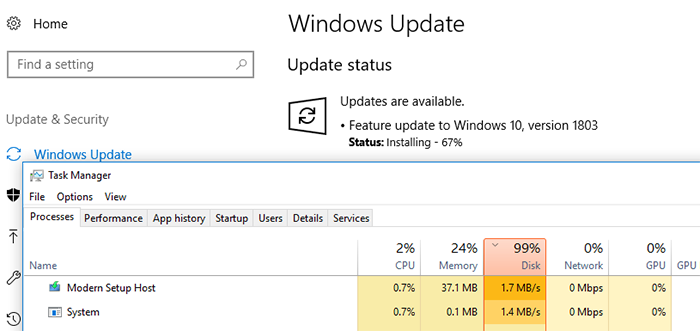

I was typing along in Visual Studio, on a client's rented Microsoft Azure virtual machine

running Windows Server 2016, and the editor stopped accepting keystrokes. Then this came up:

(I've blurred out my client's file names.)

Visual Studio "recovered," losing about the last 30 seconds of typing.

But what was it? Not a Windows Update; I checked for those before starting the work

session, and anyhow, the update history showed none installed today.

Not a Visual Studio update as far as I can tell; the version is still one from late 2017.

The event log showed that devenv.exe (Visual Studio) had crashed, and the whole file that I was

editing was included in the log as part of the error message!

I don't know what was going on. But the important thing is, this wasn't Windows 10, it was

Windows Server in an environment intended for serious business and scientific use,

and I had no indication of pending updates.

[Update:] "Automatically update extensions" was checked in Visual Studio (under Tools,

Options, Extensions and Updates; run Visual Studio as administrator to change this).

It must have been an update to an extension, maybe something specific to Azure.

I think I should have been asked, though!

[Further update:] Separate from this, there was a big Visual Studio update,

from 15.6.2 to 15.7.1, waiting for me. Silly me, updates are no longer under

"Extensions and Updates." They have moved to "Help," "Check for Updates."

So I'm updating 2 computers now.

Permanent link to this entry

|

2018

May

11

(Extra)

|

Ununoctium should have been Hectodecaoctium

One of the most barbaric assaults on the classical languages

in all of scientific terminology is the system of temporary

names for high-numbered chemical elements,

such as ununoctium for element 118.

In the first place, "one one eight" does not mean 118 in Latin.

Place value is an attribute of digits, not languages.

Second, even if "one one eight" were what they wanted to say, they

seem to be clipping off parts of words. The combining stem of unus

is uno- or uni-, not un-.

Latin doesn't combine words, especially numerals, as freely as Greek,

so let's go over to Greek...

and there we find the problem already solved; Latinized Greek numeric

prefixes are commonly used in the Metric System and other scientific terminology.

Element 118 should be hecto-deca-octium (hyphens optional).

That is a longer word, but it has the advantage of making sense!

Permanent link to this entry

|

2018

May

11

|

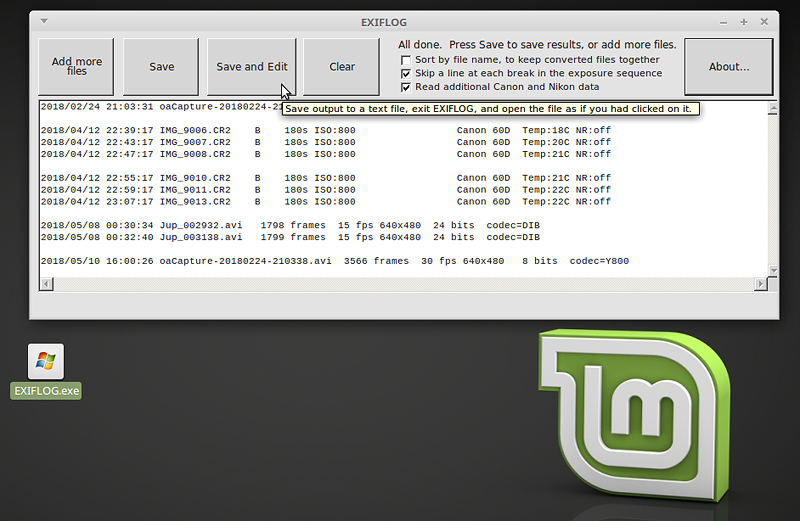

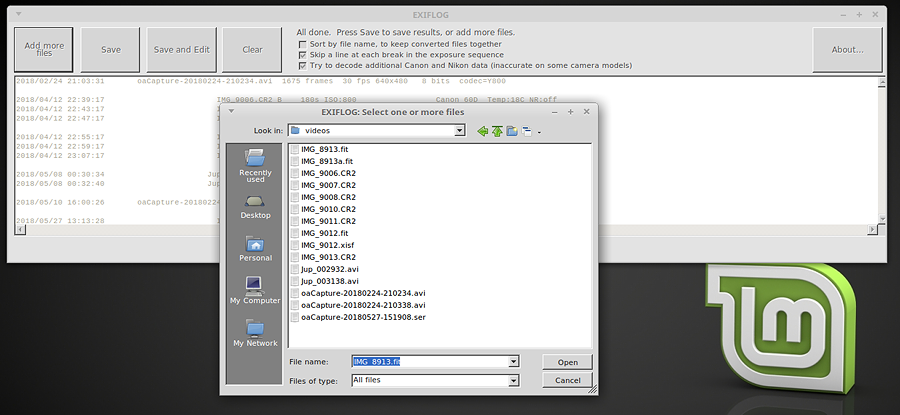

Important update to EXIFLOG

Versions 3 and 3.1 of EXIFLOG, my photo-logging software, had a couple of bugs that I've just fixed.

Most seriously, EXIFLOG would understate the number of frames in some video files, especially those

created with oaCapture, due to reading the wrong EXIF field. There was also some missing functionality

regarding output format — now you can get the output sorted by file name if you want, to keep

related files together.

Click here to get the new version, which is free.

More good news: I am now using EXIFLOG 2.5 (not 3.2) regularly under Linux.

It is a WinForms app that runs under Mono. More information is on the EXIFLOG web page.

Here you see it running under Linux Mint.

Even "Save and Edit" works.

Update: And now the current version is 3.3, with support for FITS, XISF, and SER added.

Permanent link to this entry

|

2018

May

10

|

Jupiter

Jupiter from last night (around 12:30 a.m. EDT).

The same 8-inch telescope and DFK camera, but this time with a 2× instead

of 3× extender. You can see that the resolution of the camera was a limiting

factor here — I should have had more magnification ahead of it.

This picture is processed to slightly higher contrast than other recent ones since it

contains so much fine detail. Also, the fine detail is close enough to the camera's

resolution limit that it wasn't feasible to take out the low-level noise.

I am finding that setting up on the grass rather than the concrete has completely

solved my problem with terra non firma. I can walk quite close to the telescope

and not see any motion.

Also, using oaCapture in Linux, I was actually able to capture 60 frames per second.

That means that in slightly less than 2 minutes I got more than 7000 frames of video!

And that led to a discovery: my homemade program EXIFLOG

doesn't report the number of video frames correctly. I'm working on that.

Permanent link to this entry

Defenestration

Cathy suggests the very apt term defenestration for the abandonment of Microsoft Windows.

I've had yet another upgrade-related problem, although perhaps it should be viewed as a

security improvement: a client that has had the May 8 update can no longer RDP into a host that

is lacking a somewhat earlier update. Since many research computers are seldom rebooted and thus

don't get updates that require rebooting, this is noticeable.

Linux is no paradise. It is a labyrinth; only the most common software installs automatically

and easily. But when things go wrong, you're allowed to know what they are, and it's possible

to correct them!

macOS (formerly Mac OS, maybe MacOS) is smooth enough, but only the most common software exists

unless you start using it like UNIX, in which case it's like Linux, but more expensive.

Permanent link to this entry

|

2018

May

9

|

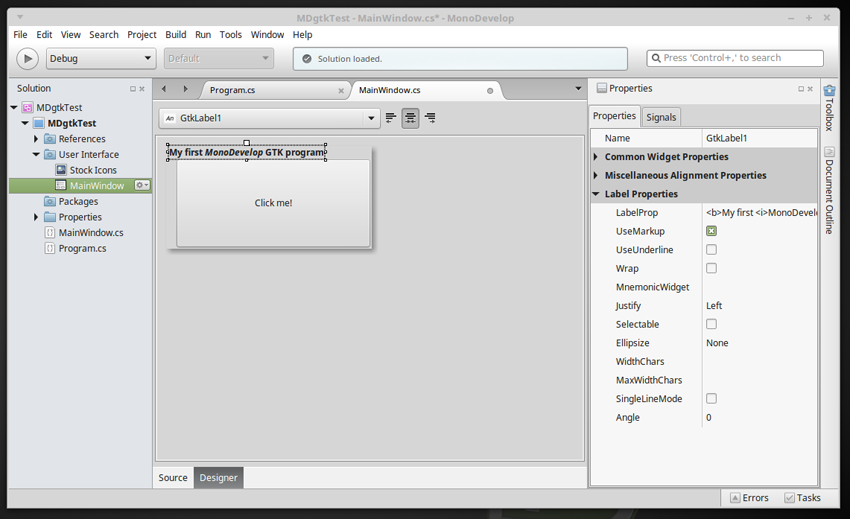

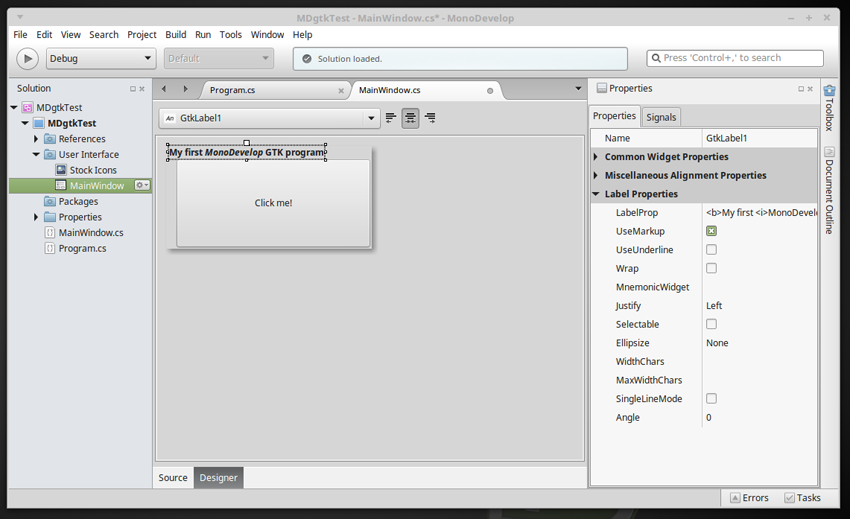

A promising IDE for Linux, Mac, and Windows: MonoDevelop

One of the things that have kept me tied to Microsoft Windows over the years has

been the availability of very good tools for developing windowed software, as well

as the excellent programming language C#.

I moved through Visual Basic (the environment that made Windows programming possible

for those who didn't want to do something that felt like wrestling octopuses),

Delphi, and then Visual Studio with C# and its WinForms window system, later supplanted

by WPF.

Under Linux, I occasionally used Java with Swing, and thought about using Python with Tcl/Tk,

but never really dug in.

Well... With Microsoft's encouragement (to make C# a portable programming language),

the language, the .NET runtime, and the WinForms window system have been ported

to Linux and the Mac (and, for good measure, also implemented in Windows) by

the Mono project.

What caught my attention today is

MonoDevelop,

a development environment similar to Visual Studio and possibly even better.

It's available for Windows, Linux, and Mac.

My understanding is that Visual Studio for Mac is essentially the same product remarketed.

Initial impressions are very good. I haven't used it enough for this to be considered a

full review. Under Linux, it generates .exe files that run with Mono.

If they are command-line apps, they also run under Windows without recompilation.

If they are GTK graphical apps, some changes need to be made to make them portable —

I'll dig into that.

The windowing system that is fully supported,

with a graphical layout editor, is GTK, or more precisely gtk#, the most widely

used window system in Linux. I am just digging into it.

The important thing is, now I have a quick and easy way to write programs for Linux and Mac

with a graphical user interface. As soon as I get the kinks out of GTK portability, it will

be good for all three platforms.

Another way to develop three-platform programs is to develop them under Windows using WinForms,

then use Mono to run them under Linux and macOS. If you do that, you can edit, compile, and

run them with MonoDevelop, but there isn't a WinForms window editor.

Not quite all of WinForms is supported, but there's a utility (MoMA) to tell you what

the difficulties are.

Word to the wise: When you install monodevelop under Linux, be sure

also to install mono-dev. Otherwise nothing will compile.

And yes, I know neither Mono nor MonoDevelop is new.

That's the way I like it! For tools that I'm going to rely on, I want something that

has been out for several years, not something that is still in beta.

Finally, see what I wrote a while back about

the importance of LOBIS software.

Not everything is a game or a mass-market smartphone app.

Permanent link to this entry

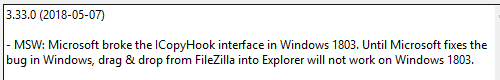

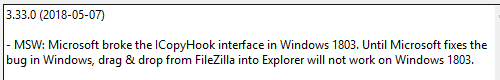

Drag-and-drop problems in Windows 10 Feature Update 1803

A message that just popped up in FileZilla:

Sure enough, I cannot drag and drop from the remote (right-hand) window of FileZilla

to the desktop, even though I've had the May 8 update after Feature Update 1803.

Microsoft, we expect better than this!

I'm sure a fix is coming, but it's not acceptable to break things that work,

and then fix them a few weeks later. We need our computers all the time, not

just intermittently.

This bug was fixed by May 26; I don't

know which update took care of it.

Permanent link to this entry

|

2018

May

8

|

Jupiter, in haste

Here's Jupiter at 12:30 a.m. on May 8, with the Great Red Spot at the lower left

and the satellite Io in front of Jupiter to the upper right of center.

The reason Io looks so odd is that we are seeing its sunlit face, almost the

same color as Jupiter, plus a small part of its shadow on Jupiter below it.

Taken in great haste as I was dodging clouds. Celestron 8 EdgeHD, 3× extender,

DFK camera, stack of the best 50% of about 1200 video frames.

Recorded with FireCapture and processed with AutoStakkert 3 and PixInsight.

Permanent link to this entry

|

2018

May

6

|

If the Apollo moon landing were fake...

This came up in a Facebook conversation...

Suppose the Apollo 11 moon landing were fake.

If so, its scientific results must have been fake also

(moon rocks,

various measurements, and close-up pictures of things not visible

from earth). And that would have been tricky.

The fakers would surely have been keenly aware that better scientific

research was coming soon — if not genuine landings, then space

probes, and if not that, then at least better multiwavelength ground-based

observations. (Telescope images from earth are 5 to 10 times sharper than

they were in 1969, and cover a wider band of wavelengths, due to better

image sensors. And not all the good telescopes are in government hands;

even amateurs with backyard telescopes

regularly get lunar and planetary images better than the

big observatories could obtain back then.)

If a later observation were to show that the moon rocks that Apollo 11

"brought back" were nothing like the real surface of the moon —

or if later high-resolution images did not match "photographs" from Apollo —

then they'd be in trouble.

That is one of many reasons I don't think the Apollo landings were fake.

Using the technology of 1969, it would actually have been easier to do a real one

than to produce a convincing fake. Remember, they had excellent aircraft and

rockets but no digital image processing.

Besides, as someone else pointed out, why fake so many landings?

If the landing were fake, why not do just one and quit while you're ahead?

Why keep producing evidence that might be used against you?

Permanent link to this entry

English language mangled

The following specimen of weird English is from an e-mail message that I

received from an obvious scammer. Enjoy trying to read it.

This is concerning your total claims which you have suffered enough in receiving from the paying bank which has passed through final verification for final release and behold it has been noticed that you did no contract under any of the mentioned government contract which must have leaded you in earning such huge amount of funds respectively.

This top secret leaked during the traces of your last payment transmission to recover any last money laundering clearance issued to you as it was not in record if you have been in any of the countries your claims is coming from thereby raising suspicion on source of your claims.

Verily, we the FBI have come across such situation of your case which lead to jailing the violators but in your case I have personally found out that you must have been used to stand chances of receiving over invoiced contract funds or stand as a next of kin to a deceased whose deposit does not have a heir in the bank records where the deceased funds was deposited in UK, Asia and Africa. The initiator of such business to you may have resigned or retrenched from job thereby jeopardizing him/her from the chances of accomplishing his desired deal with you.

Do not panic about this information because I am here to work it out with you as I know what could be done for us to have the total funds released to you while we share the funds to a mutual agreed percentage afterwards if you can follow my instructions.

However, I have not revealed to the board of FBI on this leakage of your deal which will be subjected to criminal offense and fraudulent act against the Banks concerned due to my interest in getting the funds shared with you.

Please be honest with me as you should know that I have came to find out the sources of this claims to you and I promise to you that no other official will know about this if we can act fast and have the funds released for our sharing.

Verily!

Permanent link to this entry

|

2018

May

5

|

Getting the latest Stellarium under Linux

If you obtain Stellarium through the Ubuntu or Linux Mint software utility,

at present you don't get the latest version (0.18). Instead, you get an

older version that is in the Ubuntu package repository.

To get the latest Stellarium, follow the

instructions at this web site.

In brief:

sudo add-apt-repository ppa:stellarium/stellarium-releases

will tell Linux where to get it, and then, if you already have Stellarium,

you can update in the normal manner.

Otherwise, install as follows:

sudo apt-get update

sudo apt-get install stellarium

Permanent link to this entry

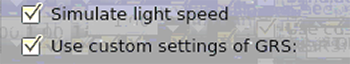

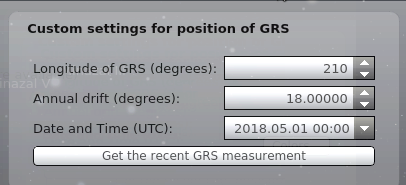

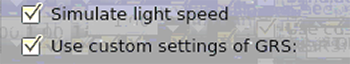

Getting the Great Red Spot right in Stellarium

Note: A fix for this bug has been entered into Stellarium's source code

repository. As far as I know it is not in a released version, but will be soon,

probably in version 0.18.2. It had to do with taking into account the position

of the Great Red Spot on the picture/map of Jupiter that is used to generate the image.

As installed, Stellarium shows Jupiter's Great Red Spot in the wrong place.

This is probably because of unpredictable drift over several years that has not

been taken into account.

Fortunately, customization of the Great Red Spot (GRS) is now on the Sky and Viewing Options

menu. You no longer have to edit configuration files.

Unfortunately, it doesn't seem to work as intended! If you go to JUPOS for current

observations of its position (which you can do by just clicking a button), you get

the current System II longitude of the GRS, which is about 290°.

But to get it to show up in the right place, I had to enter its longitude as 210°.

Here are the settings I am using, which should work (this summer, at least) for observers

anywhere in the world:

"Simulate light speed" says to take account of the half hour or more that is needed for

light to reach us from Jupiter. That's enough to affect the apparent position of the

Great Red Spot substantially.
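To put a rough number on that, here is a back-of-the-envelope estimate. The inputs are assumptions, not values taken from Stellarium: Jupiter about 4.4 AU away (roughly its distance near the May 2018 opposition), light taking about 499 seconds per AU, and System II rotating about 870.27 degrees per day. The function name is made up for illustration.

```shell
# Rough estimate of how far Jupiter rotates while its light travels to us.
# Assumed values: 499 s of light time per AU, and a System II rotation
# rate of 870.27 degrees per day. The distance in AU is the argument.
grs_light_time_shift() {
    awk -v au="$1" 'BEGIN {
        light_s = au * 499.0             # light travel time, seconds
        deg = light_s * 870.27 / 86400   # rotation during that time, degrees
        printf "%.1f min, %.1f deg\n", light_s / 60, deg
    }'
}

grs_light_time_shift 4.4
```

With these assumptions the light time is about 37 minutes, during which Jupiter turns about 22 degrees, which is easily noticeable when judging where the Great Red Spot should appear.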

(I regret the poor quality of the screen shots; Stellarium is maddeningly hard to take

screen shots of because the windows become dim and transparent when they lose the focus.)

Permanent link to this entry

|

2018

May

4

|

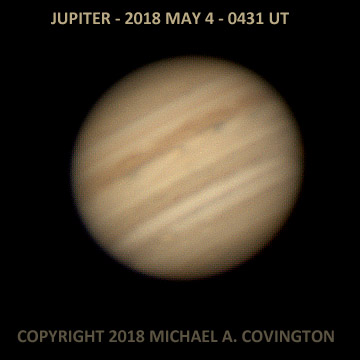

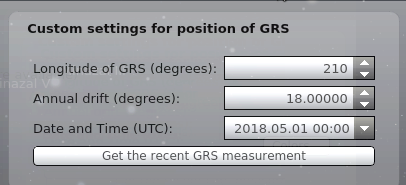

Jupiter

This is not a world-class picture of Jupiter, but I'm getting decent results

with some consistency. Celestron 8 EdgeHD, 3× converter, DMK21AU04 camera,

stack of the best 80% of about 3600 video frames, collected with oaCapture and

processed with AutoStakkert 2 and PixInsight.

Permanent link to this entry

Fast file transfer from PC to PC

I've hit upon a fast way to transfer these big video files from one PC to another:

an SD memory card in the computer's SD card slot.

At least in a quick test, this was appreciably faster than USB flash drives

or a USB file transfer cable (not a late model), and considerably faster than the

computer network (the transfer would have involved SMB under Linux, which is not

optimal).

There may be another good way to do it, but SD cards certainly work well.

Additional note:

If there are going to be files larger than 4 GB, the card needs to be formatted

as exFAT rather than FAT32. That also apparently makes it transfer data faster.
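Before copying, it can help to check whether any file is too big for FAT32, which cannot hold a file of 4 GiB (4294967296 bytes) or more. A minimal sketch, assuming the video files are in the current directory; the function name is made up for illustration.

```shell
# List files that are too large for a FAT32 card (4 GiB or more).
too_big_for_fat32() {
    # -size +4294967295c matches files larger than 4294967295 bytes,
    # that is, 4294967296 bytes (4 GiB) and up.
    find "$1" -type f -size +4294967295c
}

# Example: check the current directory before copying to the card.
too_big_for_fat32 .
```

If this prints nothing, every file will fit on a FAT32 card; otherwise the card needs a different format.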

Another note:

Linux does not properly account for Daylight Saving Time in the timestamps in

the exFAT filesystem. For file transfer, it is better to format the card

(under Windows) as NTFS with a 16-kB allocation unit.

Then it cannot be used in digital cameras (unless reformatted),

but Windows and Linux can read and write it correctly.

The exFAT filesystem bug has been corrected by the developers but apparently

has not propagated into Linux Mint yet. (August 30, 2018)

Permanent link to this entry

|

2018

May

3

|

Two-finger tap on touchpad = right-click?

We always learn more about how to use one operating system by learning

to use another.

I do a lot of right-clicking, mainly for "Open in new window" and "Open with...".

Until now, I've been doing it on a touchpad awkwardly, by holding down the

right button while tapping with a finger.

That gets my hands tired.

In Linux, the way you right-click on a touchpad is to tap with two fingers.

Aha! Much easier.

In Windows this may or may not be an option in your gesture settings;

to make it work with my Synaptics touchpad, I had to use

the

registry setting here,

which does not seem to work in quite all contexts,

but it's a lot better than nothing.

Permanent link to this entry

Custom /dev/video names for cameras in Linux

On my autoguiding computer, I found Linux vacillating as to whether the

DMK video camera was /dev/video1 or /dev/video2,

depending, perhaps, on choice of USB ports.

So, at the suggestion of Patrick Chevalley (one of the heroes of free

astronomy software), I created and installed this file:

# This file is /etc/udev/rules.d/99-video-link.rules

# As suggested by Patrick Chevalley,

# this file sets up custom /dev/video links

# for my cameras.

# The names /dev/videoDMK, /dev/videoDFK become synonyms

# for /dev/video1, /dev/video2, or whatever the camera is

# assigned to when it connects.

# The strings for recognition, "DMx 21AU04.AS" etc.,

# are the name of the camera as shown in oaCapture.

KERNEL=="video[0-9]*", ATTR{name}=="DMx 21AU04.AS", SYMLINK+="videoDMK"

KERNEL=="video[0-9]*", ATTR{name}=="DFx 21AU04.AS", SYMLINK+="videoDFK"

The files in /etc/udev/rules.d tell Linux what to do when a new device

is discovered. If you have oaCapture or KStars, you already have some

files there for dealing with video cameras. What this file does — executed

last, hence the name beginning with 99 — is to look for devices already

there with the names "DMx 21AU04.AS" and "DFx 21AU04.AS" respectively, and

make symbolic links to them as /dev/videoDMK and /dev/videoDFK.

Naturally, you should customize this for your camera or cameras.
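To customize it, you need the name string that udev sees for your camera. The sketch below assumes the camera is currently /dev/video1 and that udevadm is available, as it normally is on Ubuntu and Linux Mint. The helper make_video_rule is made up for illustration; it only prints a rule line in the same form as the ones above.

```shell
# Print a udev rule line that links a camera, identified by its
# name attribute, to /dev/<linkname>. Hypothetical helper.
make_video_rule() {
    printf 'KERNEL=="video[0-9]*", ATTR{name}=="%s", SYMLINK+="%s"\n' "$1" "$2"
}

make_video_rule "DMx 21AU04.AS" videoDMK

# To see the name string for a connected camera (assumed here to be
# /dev/video1), and to apply edited rules without rebooting:
#   udevadm info --attribute-walk --name=/dev/video1 | grep 'ATTR{name}'
#   sudo udevadm control --reload-rules
#   sudo udevadm trigger
```

After the rules are reloaded, unplugging and reconnecting the camera should make the new link appear.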

Permanent link to this entry

|

2018

May

2

|

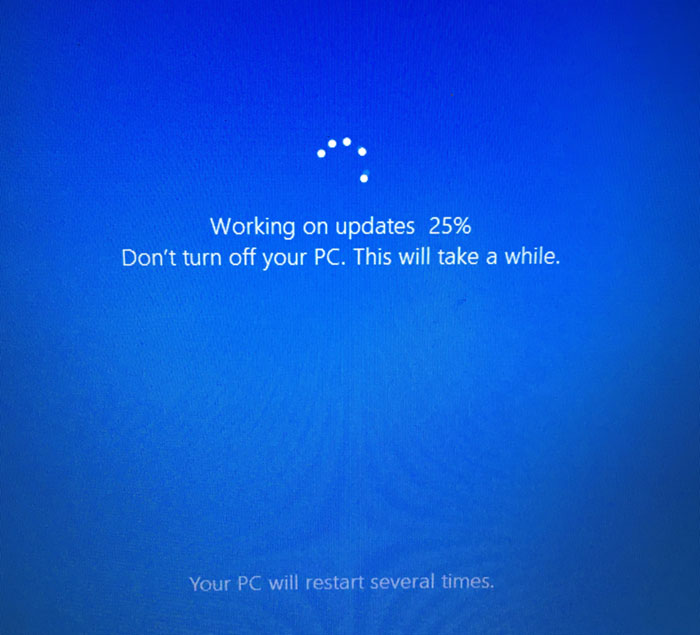

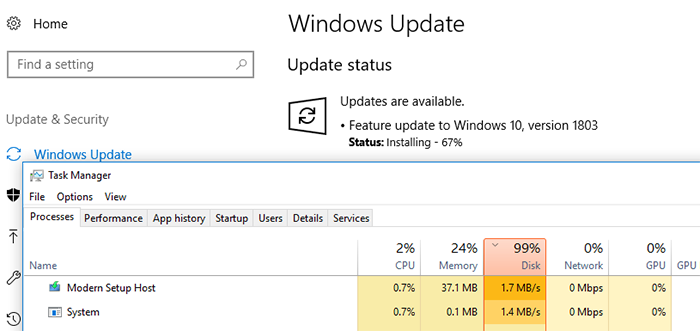

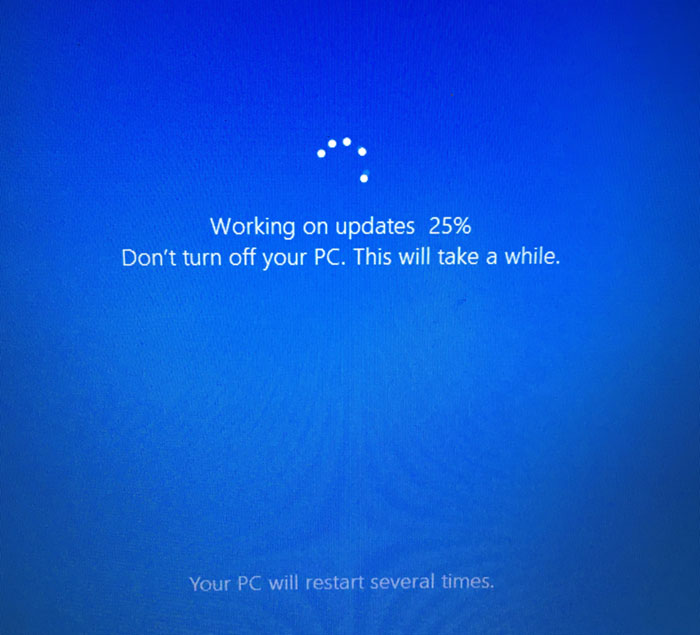

Windows 10 Feature Update 1803 —

Error, mistake, misfortune, or calamity?

I have no idea what went wrong.

Other people did not have this experience...

As you know, Windows 10 periodically has feature updates such as Creators Update,

which are free and fairly substantial new versions of the operating system

released about twice a year.

One of them came out today...

...and my three Windows 10 computers were all out of commission for several hours.

I did mathematical research for two and a half hours with paper and pencil (and a library

full of books) while my Lenovo laptop did part of the installation.

Total download and install time on the Lenovo was about six hours,

and on the Asus laptop, about three. The Dell desktop got off easy, with just

a couple of hours of incapacity.

For about four hours the Lenovo was "installing" and showed 95% to 100% disk

utilization by the installer, which made other usage almost impossible.

(During this, I was able to use it to

RDP to a remote Windows server and do a bit of work that way; RDP does not

use the client machine's disk. Web browsers do, and I was not even able to view web

pages locally.)

Then there were one to two hours of this:

(going from 0 to 100% several times, of course)

and then, finally, the new OS version was ready.

This is the first Feature Update where I was not asked whether to install it.

I did a "Check for Updates" and there it was, downloading and installing without my consent.

I am particularly concerned by the Lenovo laptop that was incapacitated for about six hours.

Even two or three hours are too much, but six? What was it doing with 100% disk usage

for about four hours? Some kind of malware scan?

As I said on Facebook today, "Dear Mr. Microsoft: This is costing me money!"

I have never been a great fan of Linux, but from now on, every Windows computer that I

possess will dual-boot Linux.

I know it will slow down the restarting of Windows because there will be a GRUB menu,

but that's how it needs to be.

The next time Windows becomes non compos mentis, I want to be able to use an alternative OS

to get at least a little work done.

Until now I've used Linux mainly in a VM under Windows.

That won't do when Windows is incapacitated.

What is Feature Update 1803, by the way?

A rather minor upgrade, which is why I didn't think it would take long.

Some functionality relating to pictures and Bluetooth is added.

"Homegroup" has been taken away, which is just as well because it was a mistaken idea;

workgroups already did what was needed.

You can choose custom background colors in Home Edition, which is good...

The "Type here to search" box at the bottom is light, not dark.

If you change your "default app colors" from "Light" to "Dark" (deep within Settings),

it goes back to being dark, and some other things, such as Windows Settings, come up

dark too. Recall that

I have Cortana disabled.

I may decide not to display the search box at all.

So it was a lot of trouble for just a little improvement.

Update: After some pondering, I decided to roll back my astronomy-camera laptop (only)

to 1709 and to mark all of its Wi-Fi connections as "metered," which limits the amount of

Windows updating that will take place. There is no way I can guarantee it is stable,

but that will help. And I am moving nearly all image acquisition and autoguiding to Linux.

Permanent link to this entry

|

|

|

This is a private web page,

not hosted or sponsored by the University of Georgia.

Copyright 2018 Michael A. Covington.

Caching by search engines is permitted.

To go to the latest entry every day, bookmark

http://www.covingtoninnovations.com/michael/blog/Default.asp

and if you get the previous month, tell your browser to refresh.

Portrait at top of page by Krystina Francis.

Entries are most often uploaded around 0000 UT on the date given, which is the previous

evening in the United States. When I'm busy, entries are generally shorter and are

uploaded as much as a whole day in advance.

Minor corrections are often uploaded the following day. If you see a minor error,

please look again a day later to see if it has been corrected.

In compliance with U.S. FTC guidelines,

I am glad to point out that unless explicitly

indicated, I do not receive substantial payments, free merchandise, or other remuneration

for reviewing or mentioning products on this web site.

Any remuneration valued at more than about $10 will always be mentioned here,

and in any case my writing about products and dealers is always truthful.

Reviewed

products are usually things I purchased for my own use, or occasionally items

lent to me briefly by manufacturers and described as such.

I am an Amazon Associate, and almost all of my links to Amazon.com pay me a commission

if you make a purchase. This of course does not determine which items I recommend, since

I can get a commission on anything they sell.

|

|